Artificial Intelligence (AI) technology is developing at an enormous pace, and in recent times it has given rise to new tools and spectacular applications.

One of the areas where advances have been most notable is image recognition, thanks in part to the development of new Deep Learning techniques. Today we have systems at our fingertips that are more accurate than humans themselves at image classification and detection tasks.

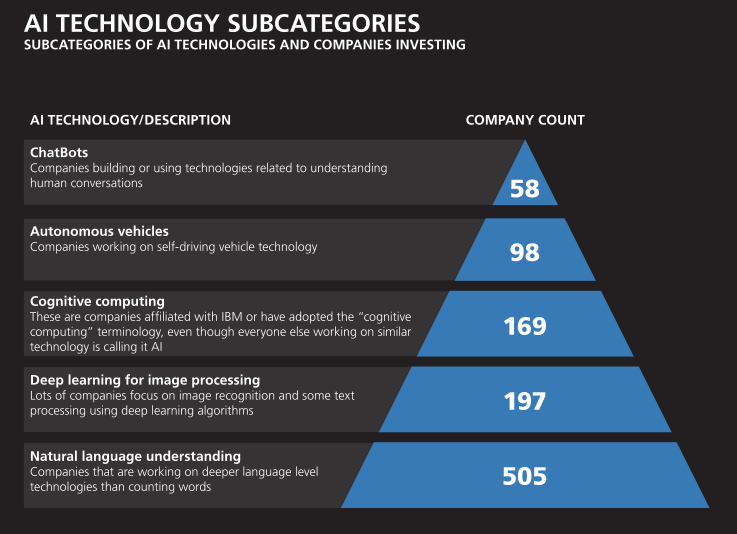

According to a recent O’Reilly study of the AI market, image recognition is one of the areas of AI in which the most companies in the US are investing.

Source: The New Artificial Intelligence Market. O’Reilly. Aman Naimat

The use cases are many and span diverse industries and sectors. Some interesting examples are the following:

- Image tagging: extracting tags or keywords associated with images so they can be classified or searched later. There are multiple applications in the tourism and retail sectors.

- Face-based user verification: security, authentication, profiling / segmentation of customers, identification in physical stores.

- Sentiment analysis: detecting the mood of customers or their shopping experience in physical stores.

- Customer analysis: getting to know users better by detecting logos or text on the products they consume.

- Diagnosis of diseases: diagnostic imaging based on comparison with previous diagnoses. Retinopathies, diabetes, medical imaging...

- Augmented reality: gaming, virtual catalogs, advanced interaction with the environment...

- License plate detection: security, segmentation, identification...

How to carry out projects that integrate image recognition

One of the most complex tasks in projects that integrate advanced AI techniques is developing the models and putting them into production.

In the case of image recognition, a very interesting alternative is to rely on the pre-trained models offered by the main cloud platforms.

This way, we significantly reduce the effort required from highly sought-after specialists and speed up putting our services or applications into production.

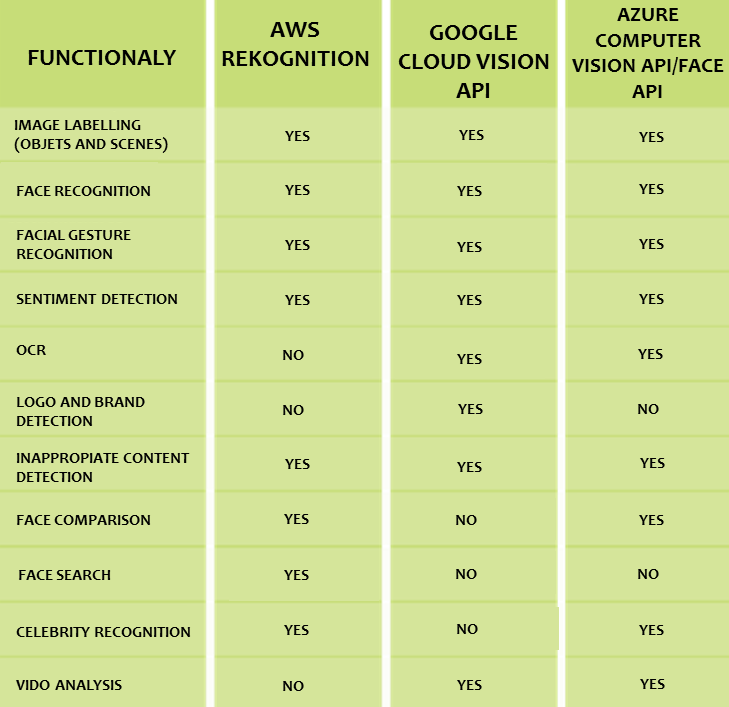

However, the image recognition services offered by each provider differ in functionality and characteristics, which makes it necessary to carry out a preliminary analysis to choose the solution that best suits our scenarios or projects.

In this article we will make a small comparison of three of these cloud services, based on their characteristics at the end of 2017, and analyze the pros and cons of each one.

Comparison of cloud services

We will analyze the services offered by AWS, Google Cloud and Microsoft Azure. First of all, we will look at which desirable functionalities each platform covers and which it does not.

It should be noted that we must look not only at whether a functionality is available, but also at how precise the model behind it is, that is, how well the service works in a given case.

This may depend on our context and the type of images we use; factors such as image quality can greatly affect the accuracy of the results.

We must also take into account other factors, such as integration with other cloud tools and services, API limitations and costs.
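One way to check how well a service works on our own kind of images is to compare the labels it returns with the labels we expected, over a small hand-labelled set. The following is only a minimal sketch: the function names and the sample data are hypothetical, and label matching is a simple case-insensitive comparison.

```python
def label_scores(predicted, expected):
    """Return (precision, recall) for one image, comparing labels case-insensitively."""
    pred = {p.strip().lower() for p in predicted}
    exp = {e.strip().lower() for e in expected}
    hits = len(pred & exp)
    precision = hits / len(pred) if pred else 0.0
    recall = hits / len(exp) if exp else 0.0
    return precision, recall


def average_scores(results):
    """Average precision and recall over a list of (predicted, expected) pairs."""
    scores = [label_scores(p, e) for p, e in results]
    if not scores:
        return 0.0, 0.0
    n = len(scores)
    return sum(s[0] for s in scores) / n, sum(s[1] for s in scores) / n


# Hypothetical sample: labels a service returned vs. labels we expected.
sample = [
    (["Person", "Glasses", "Indoor"], ["person", "glasses"]),
    (["Car", "Road"], ["car", "license plate"]),
]
avg_precision, avg_recall = average_scores(sample)
print(f"precision={avg_precision:.2f} recall={avg_recall:.2f}")
```

Running the same set through each provider gives a rough, context-specific comparison, although exact string matching will undercount synonyms such as "automobile" and "car".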

Comparative example with the same photo

Drawing a conclusion about the quality of one service or another is complicated. However, we can send the same image to the three platforms and compare the results we obtain.

This way we can get a better idea of the results obtained under equal conditions.

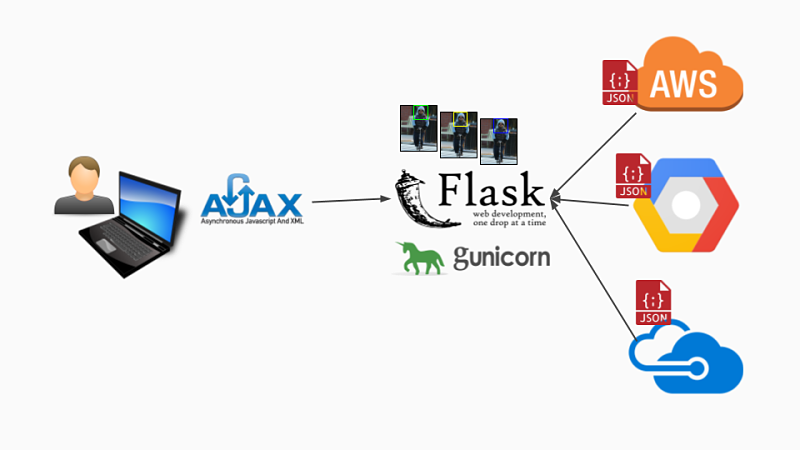

To carry out this exercise we have developed a small Python and Flask application that, given an image, sends it to the three providers and shows us the results, in this case for the labelling and face detection functionality.

This application is available on GitHub and is a good example of how to invoke the three services through the SDKs that each provider supplies.
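The core idea of sending the same image to several providers can be sketched as follows. This is not the code of the application mentioned above: `analyze_with_all` and the three `fake_*` functions are hypothetical stand-ins so the sketch runs offline, and in a real project each callable would wrap the corresponding provider SDK.

```python
from concurrent.futures import ThreadPoolExecutor


def analyze_with_all(image_bytes, providers):
    """Send the same image bytes to every provider and collect results by name.

    `providers` maps a provider name to a callable that takes image bytes.
    A failure in one provider is recorded and does not stop the others.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max(1, len(providers))) as pool:
        futures = {name: pool.submit(fn, image_bytes) for name, fn in providers.items()}
        for name, future in futures.items():
            try:
                results[name] = {"ok": True, "data": future.result()}
            except Exception as exc:  # keep the comparison going if one call fails
                results[name] = {"ok": False, "error": str(exc)}
    return results


# Hypothetical stand-ins for the real SDK calls.
def fake_aws(image_bytes):
    return [{"Name": "Person", "Confidence": 99.1}]


def fake_google(image_bytes):
    return [{"description": "person", "score": 0.97}]


def fake_azure(image_bytes):
    raise RuntimeError("quota exceeded")


report = analyze_with_all(
    b"fake-image-bytes",
    {"aws": fake_aws, "google": fake_google, "azure": fake_azure},
)
for name, outcome in report.items():
    print(name, outcome)
```

Calling the providers in parallel keeps the response time close to that of the slowest service, and recording errors per provider means a quota or network problem with one of them does not hide the results of the other two.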

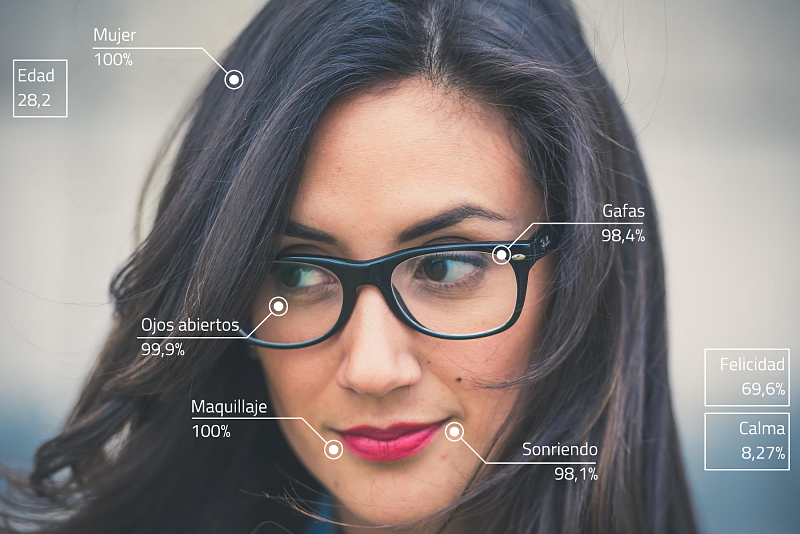

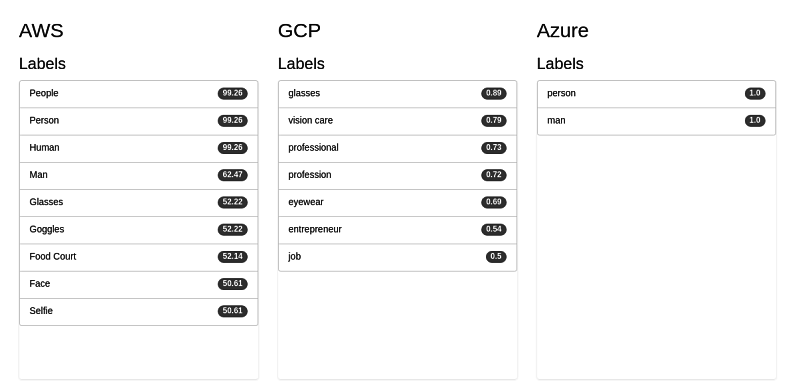

In this case we have used a photo of a colleague from Paradigma. First, we see what type of labels each platform assigns to the photo and with what confidence. As we can see, the number of labels we get and their accuracy vary a lot. We must bear in mind that confidence is reported in the range 0-100 in the case of AWS, and in the range 0-1 in the case of Google Cloud and Azure:

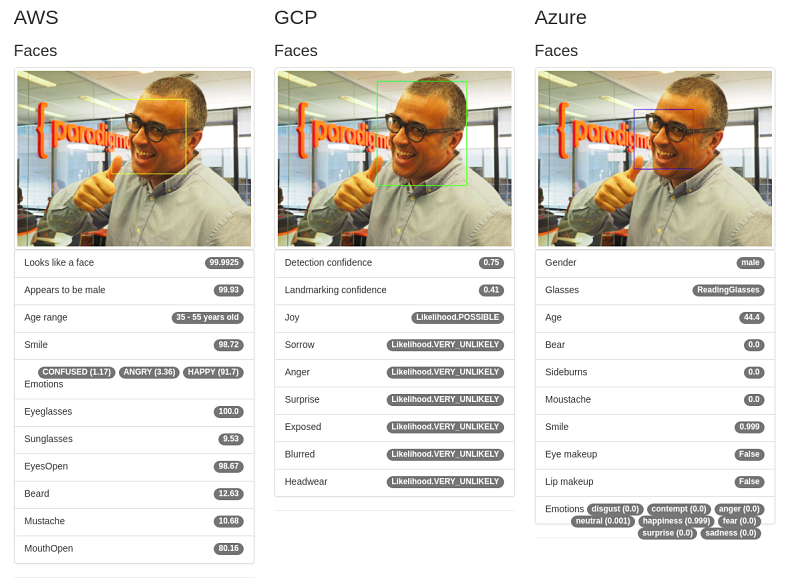
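Because the confidence scales differ, it helps to bring all labels onto a common 0-1 scale before comparing them side by side. Below is a minimal sketch under the scales described above; the `normalize_labels` helper, the provider keys and the sample values are hypothetical and are not results from this comparison.

```python
# Assumed scales, following the text: AWS reports 0-100, Google Cloud and Azure report 0-1.
CONFIDENCE_SCALE = {"aws": 100.0, "google": 1.0, "azure": 1.0}


def normalize_labels(provider, labels):
    """Convert (name, confidence) pairs to a shared 0-1 scale, highest first."""
    scale = CONFIDENCE_SCALE[provider]
    normalized = [(name.lower(), round(conf / scale, 4)) for name, conf in labels]
    return sorted(normalized, key=lambda item: item[1], reverse=True)


# Hypothetical values for illustration only.
aws_labels = normalize_labels("aws", [("Person", 99.2), ("Glasses", 87.5)])
google_labels = normalize_labels("google", [("glasses", 0.93), ("person", 0.98)])
print(aws_labels)
print(google_labels)
```

Even on the same scale, a score of 0.9 from one provider is not necessarily equivalent to 0.9 from another, since each model is calibrated differently.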

We can also see where the face is detected within the image, boxed in a different color for each service. We also see other data related to sentiment analysis, age, gender, glasses and other attributes. As we can see, each service offers different properties.

Keep in mind that, in this case, we have used only a subset of the three APIs in order to make the comparison as fair as possible. The three APIs have more features that we will not analyze in this article, for example the location of facial features (eyes, mouth, nose...) in the image.
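To draw the detected faces on the same image, the boxes returned by each service first need to be converted to a common format. The sketch below assumes the response shapes as we understand them: AWS returning ratios of the image size, Google Cloud returning corner vertices in pixels, and Azure returning a pixel rectangle. These shapes and the helper names are assumptions and should be checked against each provider's current documentation.

```python
def box_from_aws(bounding_box, image_width, image_height):
    """AWS-style box: Left/Top/Width/Height as ratios of the image size (assumed)."""
    left = round(bounding_box["Left"] * image_width)
    top = round(bounding_box["Top"] * image_height)
    width = round(bounding_box["Width"] * image_width)
    height = round(bounding_box["Height"] * image_height)
    return (left, top, width, height)


def box_from_google(vertices):
    """Google-style box: a list of corner points with x/y in pixels (assumed).

    Missing coordinates are treated as 0.
    """
    xs = [v.get("x", 0) for v in vertices]
    ys = [v.get("y", 0) for v in vertices]
    return (min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys))


def box_from_azure(face_rectangle):
    """Azure-style box: left/top/width/height already in pixels (assumed)."""
    return (
        face_rectangle["left"],
        face_rectangle["top"],
        face_rectangle["width"],
        face_rectangle["height"],
    )


# Hypothetical responses describing the same face in a 1000x500 image.
aws_box = box_from_aws({"Left": 0.25, "Top": 0.1, "Width": 0.5, "Height": 0.4}, 1000, 500)
google_box = box_from_google(
    [{"x": 250, "y": 50}, {"x": 750, "y": 50}, {"x": 750, "y": 250}, {"x": 250, "y": 250}]
)
azure_box = box_from_azure({"left": 250, "top": 50, "width": 500, "height": 200})
print(aws_box, google_box, azure_box)
```

With every box expressed as `(left, top, width, height)` in pixels, the three detections can be drawn with different colors using any imaging library.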

Conclusion

In summary, vision or image recognition APIs are already mature enough to be incorporated into production projects. There are still notable differences between providers in terms of the functionalities offered and the accuracy of the models, but the services are evolving very rapidly.

In this article we have analyzed three platforms, but there are more. We must carefully select which service is best for our use case and keep in mind that we are in the midst of a race among the big cloud platforms to offer better services at a lower cost. Undoubtedly, this is an advantage for developers and companies that want to exploit this technology in their products.

In conclusion, we can say that Artificial Intelligence as a Service is a very good way to get started with these latest-generation technologies without the complexity and knowledge needed to train our own model based on Machine Learning or Deep Learning techniques and put it into production. The age of AI is here to stay.
