In the previous post, we saw how overwhelming the sheer number of ticketing tools, quality issues, and bottlenecks can be when trying to bring everything into production. We also saw that the gap between a successful PoC and industrialized production is real, and very costly.

The good news is that the industry has already found the solution, and it was not invented overnight. The answer, as we have been advocating, lies in applying the most rigorous discipline we have: Platform Engineering. The days of low-risk experimentation, of “testing with soda” as the Spanish saying goes (the AI preparation phase), are over. Now it is time to apply AI in production, at scale, with responsibility and speed.

An AI platform is not just a trend: it is the AI-native infrastructure that leading companies, including some of our clients, are already building today.

The industry is already there, and there are reference solutions

The truth is, we don’t need to be guinea pigs. The heavyweights are already clearly stating what we are seeing: AI needs Platform Engineering.

Google and Thoughtworks are just two examples of how the industry’s vision is converging. The message is clear: if you want to scale MLOps consistently, you need Golden Paths and the abstraction that only Platform Engineering can provide. It’s not just about having more GPUs — it’s about making those GPUs consumable without a 300-page manual.

What does this mean? It means that the goal is not just to support current models, but to create AI-native infrastructure. In other words, a platform designed from the ground up to orchestrate the complexity of AI agents, vector data, and the MLOps lifecycle. We are talking about a platform where automation and intelligence are built in.

If industry leaders have already validated this blueprint, the question is not if we should build it, but when we start.

An AI platform is rarely built from scratch using a single product. It is based on an intelligent orchestration of components that reduce friction and provide flexibility. For teams starting this journey, it is crucial to identify the stack that will serve as the foundation. An example of an industrialized Golden Path includes tools such as:

- Pipeline orchestration and lifecycle tracking: Kubeflow or MLflow, to standardize workflows (training, packaging, and model deployment).

- Feature management (Feature Store): tools like Feast, which keep features consistent between training (offline) and prediction (online).

- Model serving and MLOps core: using Kubernetes for deployment is just the beginning. Specialized solutions like KServe help manage scaling and model monitoring complexity.

The key is not adopting all these tools, but ensuring that the AI Platform abstracts them, so Data Scientists only interact with the simplified Golden Path we define.
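To make the abstraction idea concrete, here is a minimal sketch of what a Golden Path could look like from the Data Scientist's side. Everything in it is hypothetical: `GoldenPathRequest`, its fields, and the step names are illustrative assumptions, not the API of Kubeflow, MLflow, Feast, or KServe. The point is only that the user declares a few values and the platform expands them into the underlying steps.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class GoldenPathRequest:
    """What a Data Scientist declares. All field names are hypothetical."""
    model_name: str
    training_entrypoint: str                      # e.g. "train.py"
    feature_views: list[str] = field(default_factory=list)
    min_replicas: int = 1


def plan(request: GoldenPathRequest) -> list[str]:
    """Expand a request into the ordered platform steps hidden behind the Golden Path."""
    steps: list[str] = []
    # The feature-store step is only needed when features were requested.
    if request.feature_views:
        steps.append(f"feature-store: materialize {', '.join(request.feature_views)}")
    steps.append(f"pipeline: run {request.training_entrypoint}")
    steps.append(f"registry: register {request.model_name}")
    steps.append(
        f"serving: deploy {request.model_name} with {request.min_replicas} replica(s)"
    )
    return steps


if __name__ == "__main__":
    req = GoldenPathRequest("churn-model", "train.py", ["customer_profile"])
    for step in plan(req):
        print(step)
```

In a real platform each string would be replaced by a call to the corresponding tool, but the contract with the Data Scientist would stay this small.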

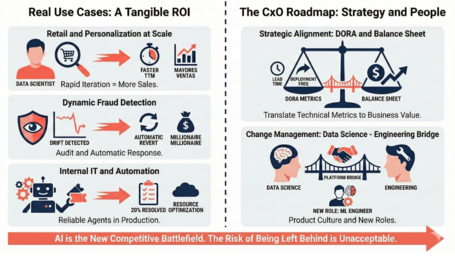

Real use cases: tangible ROI

CxOs love the word ROI. How do we evangelize the value of this platform? Simple: with facts — showing where self-service and traceability generate revenue or mitigate risk.

Let’s ground this with three examples where the AI Platform provides the strongest foundation:

- Retail and personalization at scale

A platform that enables deploying a recommendation engine for each customer segment (or even each individual) in a matter of hours. The advantage: if Data Scientists can iterate on recommendations ten times faster thanks to an automated Golden Path, the company sells more. The business focus is on improving the algorithm’s Time-to-Market.

- Dynamic fraud detection

In risk analysis, the AI platform not only deploys the detection model but also ensures auditability and real-time monitoring of model drift. If the model starts to degrade, the platform detects it and automatically rolls back to the previous version, protecting the company from losses in the millions or regulatory breaches.

- Internal IT and automation

We can use the AI platform to enable Data Scientists to build internal AI agents that automate support ticket management or optimize cloud resources. Moving from “we have an AI agent in testing” to “the agent resolves 20% of L1 incidents” is only possible with a platform that reliably manages its lifecycle and state.
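As an illustration of the drift monitoring and automatic rollback mentioned in the fraud detection example, the sketch below compares the distribution of model scores at training time with the live distribution. It uses the Population Stability Index (PSI), which is our choice for the example and not something the platform prescribes. The assumptions are that scores lie in [0, 1], that ten equal-width bins are enough, and that 0.25 (a common rule of thumb) is an acceptable threshold; `decide` is a hypothetical helper.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of scores in [0, 1]."""

    def shares(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            # A score of exactly 1.0 is placed in the last bin.
            counts[min(int(x * bins), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def decide(baseline: list[float], live: list[float], threshold: float = 0.25) -> str:
    """Return "rollback" when drift exceeds the threshold (an assumed value), else "keep"."""
    return "rollback" if psi(baseline, live) > threshold else "keep"


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]         # scores spread over [0, 1)
    shifted = [0.9 + i / 1000 for i in range(100)]   # scores piled up near 1
    print(decide(baseline, baseline))
    print(decide(baseline, shifted))
```

A production setup would feed this decision into the serving layer so the previous model version is restored without human intervention.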

There are countless use cases. As experts in your vertical and business domain, you will surely identify many more opportunities to capitalize on.

The CxO roadmap: beyond code and technology

This is where the CxO shifts into strategic consultant mode. Technology is the how, but strategy and people are the what. We cannot talk about an AI Platform without addressing change management.

Strategic alignment: connecting DORA to the balance sheet

We need to translate Feature Store jargon into business language — the language of those who unlock investment.

Using principles from our Platform Engineering series:

- Business KPIs and MLOps metrics: demonstrating that reducing Lead Time for Changes (a DORA metric we should use to measure the platform) directly translates into faster product launches and reduced risk. This is the only way to justify the investment with evidence.
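As a rough illustration, Lead Time for Changes can be computed from pairs of timestamps: when a change was committed and when it reached production. The sketch below assumes we already have those pairs and summarizes them with the median; the function name and input shape are our own choices for the example.

```python
from datetime import datetime
from statistics import median


def lead_time_hours(changes: list[tuple[datetime, datetime]]) -> float:
    """Median hours from commit to production across (committed, deployed) pairs."""
    durations = [
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    ]
    return median(durations)


if __name__ == "__main__":
    changes = [
        (datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 15)),  # 6 hours
        (datetime(2024, 1, 2, 9), datetime(2024, 1, 3, 9)),   # 24 hours
        (datetime(2024, 1, 3, 9), datetime(2024, 1, 3, 11)),  # 2 hours
    ]
    print(lead_time_hours(changes))
```

Tracking this number before and after a Golden Path is introduced is one way to put evidence behind the investment case.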

Additionally, for AI and models, we can introduce specific metrics:

- PoC-to-Prod Ratio: the percentage of Proofs of Concept that reach production within 90 days. The platform should increase this ratio from the current 10–20% to over 70%.

- Model Lift: measuring the incremental improvement in business metrics (e.g., conversion increase, fraud reduction) before and after deploying the model through the platform.

- MLOps TCO Reduction: quantifying operational cost savings achieved through self-service and automation, eliminating dependency on TicketOps and manual infrastructure configuration.
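The first two metrics above are simple enough to sketch in a few lines. This is one possible reading, not a standard definition: we assume the 90 days are counted from the date the PoC started, and that Model Lift is expressed as a relative change in a business rate. Function names and input shapes are hypothetical.

```python
from __future__ import annotations

from datetime import date, timedelta
from typing import Optional


def poc_to_prod_ratio(
    pocs: list[tuple[date, Optional[date]]], window_days: int = 90
) -> float:
    """Share of PoCs that reached production within window_days of their start.

    Each item is (started, in_production); in_production is None if it never shipped.
    """
    if not pocs:
        return 0.0
    window = timedelta(days=window_days)
    promoted = sum(
        1
        for started, in_prod in pocs
        if in_prod is not None and in_prod - started <= window
    )
    return promoted / len(pocs)


def model_lift(baseline_rate: float, new_rate: float) -> float:
    """Relative improvement of a business metric after deploying the model."""
    return (new_rate - baseline_rate) / baseline_rate


if __name__ == "__main__":
    pocs = [
        (date(2024, 1, 1), date(2024, 2, 15)),  # 45 days: counts
        (date(2024, 1, 1), date(2024, 6, 1)),   # 152 days: too late
        (date(2024, 1, 1), None),               # never reached production
        (date(2024, 3, 1), date(2024, 5, 1)),   # 61 days: counts
    ]
    print(poc_to_prod_ratio(pocs))
    print(model_lift(0.020, 0.025))
```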

Change management: bridging Data Science and engineering

This is probably the hardest part. We need to reconcile and empower both worlds:

- Data Scientists are not DevOps — and they shouldn’t be. That’s why the platform is essential as a bridge. We must establish an internal product culture: the platform team provides services and capabilities, and the Data Science team is the customer.

- Training and new roles: invest in developing Machine Learning Engineers, the glue between Data Scientists and Platform Engineers.

The first step is technological abstraction and addressing real business needs

The industry is doing it. At Paradigma, we are already doing it. We see that the benefits are tangible, and above all, we see that the risk of falling behind is unacceptable. AI is the new competitive battlefield.

So, where do we start?

My recommendation for all C-level executives, decision-makers, and business unit leaders responsible for technology decisions is simple: start with the highest-friction components — those that consume the most effort without directly impacting the business.

Focus on what is most valuable to optimize and industrialize, and what accelerates the journey from idea to production.

- Do not try to build the entire IDP at once. That would be a mistake. Be pragmatic.

- Start with capabilities like a Feature Store to solve data quality issues, or a Model Registry to address traceability and governance of model usage within your organization.
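To show why a Model Registry helps with traceability, here is a deliberately small in-memory sketch. It is not the API of any real registry product: `ModelRegistry`, `ModelVersion`, and their fields are hypothetical, and a real implementation would persist entries and store the model artifacts themselves. What it illustrates is that every version records who registered it, when, and which data it was trained on.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    training_data_ref: str   # hypothetical pointer to the dataset snapshot used
    registered_by: str
    registered_at: datetime


class ModelRegistry:
    """In-memory stand-in for a registry; a real one would persist its entries."""

    def __init__(self) -> None:
        self._versions: dict[str, list[ModelVersion]] = {}

    def register(self, name: str, training_data_ref: str, registered_by: str) -> ModelVersion:
        history = self._versions.setdefault(name, [])
        # Versions are numbered sequentially per model name, starting at 1.
        entry = ModelVersion(
            name, len(history) + 1, training_data_ref, registered_by,
            datetime.now(timezone.utc),
        )
        history.append(entry)
        return entry

    def latest(self, name: str) -> ModelVersion:
        return self._versions[name][-1]

    def history(self, name: str) -> list[ModelVersion]:
        return list(self._versions.get(name, []))


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register("fraud-detector", "snapshot-2024-01", "alice")
    registry.register("fraud-detector", "snapshot-2024-02", "bob")
    for v in registry.history("fraud-detector"):
        print(v.version, v.training_data_ref, v.registered_by)
```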

These are just a couple of examples, but those familiar with the process and its pain points will be able to identify “small wins” that gradually build momentum and shape the AI Platform.

Any effort that reduces manual work and increases self-service and standardization is paving the way toward that inevitable AI Platform. The platform is not built in a day: it is a journey. The best way to evangelize and generate traction is to demonstrate value through small but solid Golden Paths.

I hope this series of posts has sparked your curiosity about why this topic is so important, provided strategic justification through real-world challenges, and most importantly, given you ideas on where to start building a practical roadmap that delivers value and helps lead the AI conversation in your organization.
