Single-Threading Can Be Fast

Despite using a single-threaded implementation, Redis, the high-performance in-memory key-value storage system, is known for its incredible speed.

How is it possible for Redis to handle hundreds of thousands of requests per second? First of all, it is important to clarify that Redis does use multiple threads; it is not a strictly single-threaded system.

Although a single main thread is responsible for processing client requests and manipulating the data structures, Redis uses other background threads for additional tasks required for its operation.

So why is it so fast?

- Redis keeps all data in memory, enabling extremely fast access.

- The use of a simple key-value data model allows for O(1) average complexity in key lookups.

- The use of I/O multiplexing with non-blocking I/O enables efficient handling of multiple I/O operations.

- Most commands are simple and require very little CPU time.

- The data types are optimized for in-memory operations.
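To make the I/O multiplexing point more concrete, here is a minimal sketch of a single-threaded loop that serves several connections with Python's standard `selectors` module. It is a toy illustration of the technique, not how Redis is implemented: the `execute`, `poll_once` and `demo` names and the two-command protocol are assumptions made up for this example.

```python
import selectors
import socket

store = {}  # all data lives in memory and is touched by one thread only


def execute(line: str) -> str:
    """Run one toy command: 'SET key value' or 'GET key'."""
    parts = line.split()
    if len(parts) == 3 and parts[0].upper() == "SET":
        store[parts[1]] = parts[2]  # dict insert: O(1) on average
        return "OK"
    if len(parts) == 2 and parts[0].upper() == "GET":
        return store.get(parts[1], "(nil)")  # dict lookup: O(1) on average
    return "ERR unknown command"


def poll_once(sel: selectors.BaseSelector, timeout: float = 1.0) -> int:
    """Serve every connection that is readable right now; return the count."""
    served = 0
    for key, _events in sel.select(timeout):
        conn = key.fileobj
        data = conn.recv(4096)  # the selector reported readiness, so no wait
        if not data:  # peer closed the connection
            sel.unregister(conn)
            conn.close()
            continue
        # Simplification: one recv() is assumed to carry one whole command.
        reply = execute(data.decode().strip())
        conn.sendall((reply + "\n").encode())  # tiny reply, fits the buffer
        served += 1
    return served


def demo() -> list:
    """Two clients, one thread: both are served by the same loop."""
    sel = selectors.DefaultSelector()
    pairs = []
    for _ in range(2):
        server_side, client_side = socket.socketpair()
        server_side.setblocking(False)
        sel.register(server_side, selectors.EVENT_READ)
        pairs.append((server_side, client_side))
    pairs[0][1].sendall(b"SET city Madrid\n")
    poll_once(sel)
    first = pairs[0][1].recv(64).decode().strip()
    pairs[1][1].sendall(b"GET city\n")
    poll_once(sel)
    second = pairs[1][1].recv(64).decode().strip()
    sel.close()
    for server_side, client_side in pairs:
        server_side.close()
        client_side.close()
    return [first, second]
```

The loop never blocks on one slow client: it only touches sockets that the selector reports as ready, which is the property the bullet on I/O multiplexing describes.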

Multithreading Optimizations

- Asynchronous Memory Release: starting with Redis 4.0, the lazy-free mechanism was introduced to release memory asynchronously. Memory release when deleting large keys is handled in a background thread.

- Multi-threading: as stated on the Redis blog, “starting from version 6.0, Redis uses I/O threads to manage client requests, including socket reads and writes, as well as command parsing. However, the implementation does not fully leverage the potential performance benefits.”

The use of multithreaded execution helps reduce the pressure on the single-threaded execution of incoming requests in high-concurrency scenarios. This multithreading applies only to network I/O (socket reads and writes) and to parsing the request protocol, while command processing and data manipulation remain single-threaded.

With the release of Redis 8.0, a new multithreaded I/O implementation arrived, promising major throughput improvements on multi-core CPUs (between 37% and 112%, according to the data published by Redis).

Testing the New Multithreaded Implementation

As we already mentioned, Redis processes requests in a single thread, dividing execution into the following 4 steps:

- Reading the request from the socket

- Parsing the request

- Processing the request by executing the required operations on the data

- Writing the response to the socket

A new request will not begin processing until these 4 steps have been completed sequentially for the current request.

Writing results to the socket in step 4 is typically a slow operation in terms of completion time. This is where we can benefit from the new multithreaded I/O implementation by configuring Redis to execute I/O operations in a separate thread. In this way, Redis is able to begin processing a new request in parallel, thus improving performance.
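A back-of-the-envelope model can illustrate why moving step 4 off the main thread helps. The per-step timings below are purely hypothetical (they are not measurements from these tests), the `main_thread_rps` function is invented for this sketch, and the model ignores the synchronization cost between the main thread and the I/O threads.

```python
def main_thread_rps(read_us, parse_us, execute_us, write_us, offload_writes=False):
    """Upper bound on requests per second that the main thread can sustain.

    All timings are microseconds per request and are hypothetical.
    """
    busy_us = read_us + parse_us + execute_us
    if not offload_writes:
        busy_us += write_us  # step 4 also runs on the main thread
    return 1_000_000 / busy_us


# Hypothetical request: 1 microsecond each for steps 1-3, 3 for the socket write.
serial = main_thread_rps(1, 1, 1, 3)
offloaded = main_thread_rps(1, 1, 1, 3, offload_writes=True)
print(f"all four steps on one thread: {serial:,.0f} req/s")
print(f"writes moved to I/O threads:  {offloaded:,.0f} req/s")
```

The slower the socket write is relative to the other steps, the larger the gain this simple model predicts; real gains depend on the workload and on how many cores are available.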

The Redis io-threads property is the setting we will use to configure multithreaded socket writes. It is immutable, so it must be set at startup and cannot be changed on a running instance. It defaults to 1 and indicates the maximum number of threads that can be created for socket writes.

Redis also provides the io-threads-do-reads configuration property, which enables multithreaded execution for reading and parsing requests from the socket (steps 1 and 2 described above). However, according to Redis documentation, this option does not have a significant impact on performance.

Therefore, we will focus solely on the impact of the io-threads configuration.

Synthetic Tests: How Were They Executed?

Benchmarking Tool

For the tests, we used memtier_benchmark, an open-source benchmarking tool developed by Redis Labs and integrated into their development processes, mainly for regression testing and performance optimization.

memtier_benchmark was introduced in the official Redis blog and the project is available on GitHub.
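As a rough idea of what an invocation looks like, the sketch below assembles a memtier_benchmark command line. The host name and every numeric value are placeholders chosen for illustration, not the parameters used in these tests, and the `memtier_command` helper is hypothetical.

```python
import shlex


def memtier_command(host, port=6379, threads=4, clients=50,
                    seconds=60, ratio="1:10", cluster=True):
    """Build an argv list for memtier_benchmark (all values are placeholders)."""
    args = [
        "memtier_benchmark",
        f"--server={host}",
        f"--port={port}",
        f"--threads={threads}",   # benchmark worker threads
        f"--clients={clients}",   # connections per worker thread
        f"--test-time={seconds}", # run length in seconds
        f"--ratio={ratio}",       # SET:GET ratio
    ]
    if cluster:
        args.append("--cluster-mode")  # follow Redis Cluster slot redirections
    return args


# Hypothetical service name inside the cluster namespace.
print(shlex.join(memtier_command("redis-cluster.example.svc")))
```

Building the command as a list keeps it safe to pass to `subprocess.run` without shell quoting issues.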

Our Redis Test Environment

We are going to use a Redis cluster deployed on OpenShift Container Platform (OCP) with the following configuration:

- Cluster nodes: 5

- CPUs per node: 4

- Memory per node: 4Gi

We will use 4 different images to build the nodes, allowing us to compare performance across versions:

- redis.redis-stack-server:7.2.0-v10 (image based on the official redis-stack-server image with some modules enabled)

- redis:7-bookworm (official image)

- valkey/valkey:8-bookworm (official image)

- redis:8-bookworm (official image)

Test Objectives

With these tests, we aim to:

- Determine the optimal value for the io-threads configuration parameter.

- Decide which Redis flavor we should choose: the old and well-known Redis or the fresh and young Valkey.

As we have seen, we can set any value above the default value of one thread for the io-threads parameter. We are interested in understanding the performance impact of changing this configuration.

On the other hand, we know that after Redis announced it would no longer be open source, several forks of the project emerged. After reviewing those with the highest activity and contributor growth, we decided to limit our tests to Valkey.

We are also interested in comparing Valkey’s performance against the latest Redis versions. Redis, in particular, has received very positive feedback regarding performance improvements since the release of version 8.

Test Results

Each test begins with a freshly created Redis cluster in a dedicated namespace within our OCP environment. Likewise, a new pod is created from which the benchmarking tool is executed at the start of each test.

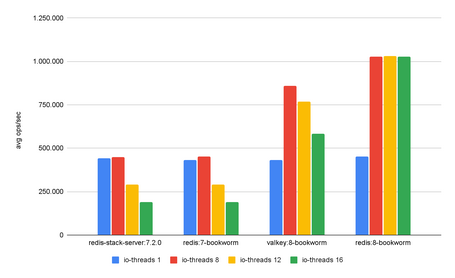

The io-threads values used were: 1, 8, 12, and 16.

The following table summarizes the results obtained in the different tests, showing the average number of operations per second for each image and io-threads configuration.

| Image | io-threads = 1 | io-threads = 8 | io-threads = 12 | io-threads = 16 |
|---|---|---|---|---|
| redis-stack-server:7.2.0 | 441,316 | 446,944 | 290,793 | 190,601 |
| redis:7-bookworm | 432,611 | 451,702 | 289,670 | 188,995 |
| valkey:8-bookworm | 431,714 | 860,290 | 766,879 | 584,707 |
| redis:8-bookworm | 453,247 | 1,027,698 | 1,030,549 | 1,025,972 |

The same data represented as a chart:

It seems that the optimal point is reached with an io-threads configuration equal to 2 times the number of CPUs configured per Redis node.

Beyond this value, performance degradation can be observed in Valkey 8, while Redis 8 maintains stable performance even when exceeding this optimal point. Therefore, Redis 8 results are more consistent.

As expected, no meaningful performance improvement was observed in Redis 7 when increasing io-threads. On the contrary, we were surprised to observe degradation: throughput dropped by roughly a third with 12 threads and by more than half with 16.
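The relative gains are easier to compare when each result is divided by the single-thread baseline of the same image. The snippet below does that with the values from the results table, taken as whole operations per second; the `speedups` and `best_setting` helpers are just names chosen for this sketch.

```python
# Average operations per second, copied from the results table.
OPS_PER_SEC = {
    "redis-stack-server:7.2.0": {1: 441_316, 8: 446_944, 12: 290_793, 16: 190_601},
    "redis:7-bookworm": {1: 432_611, 8: 451_702, 12: 289_670, 16: 188_995},
    "valkey:8-bookworm": {1: 431_714, 8: 860_290, 12: 766_879, 16: 584_707},
    "redis:8-bookworm": {1: 453_247, 8: 1_027_698, 12: 1_030_549, 16: 1_025_972},
}


def speedups(results):
    """Throughput of each configuration relative to io-threads = 1."""
    return {
        image: {threads: ops / by_threads[1] for threads, ops in by_threads.items()}
        for image, by_threads in results.items()
    }


def best_setting(results):
    """io-threads value with the highest throughput for each image."""
    return {
        image: max(by_threads, key=by_threads.get)
        for image, by_threads in results.items()
    }


for image, ratios in speedups(OPS_PER_SEC).items():
    row = "  ".join(f"{threads:>2}: x{ratio:.2f}" for threads, ratio in ratios.items())
    print(f"{image:<26} {row}")
print(best_setting(OPS_PER_SEC))
```

Note that Redis 8 nominally peaks at 12 threads, but its three multithreaded results differ by less than 0.5%, so 8 threads already captures essentially all of the gain.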

Redis 8 outperformed Valkey 8 in all scenarios. Based on these results, we settled on the 2×CPU rule as the default io-threads configuration, and for now, the balance clearly favors Redis 8 as the image to use for our Redis clusters.
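The 2×CPU rule is simple enough to encode in a deployment script. The following sketch derives the setting from the CPUs assigned to a node and passes it to `redis-server` as a command-line option, which Redis accepts in the same form as a configuration directive. The helper names are hypothetical, and the multiplier is the value suggested by these tests, not a general recommendation.

```python
def io_threads_for(cpus_per_node: int, multiplier: int = 2) -> int:
    """Apply the 2 x CPU rule of thumb observed in these tests."""
    return max(1, cpus_per_node * multiplier)


def redis_server_args(cpus_per_node: int) -> list:
    """Startup arguments; io-threads has to be set when the server starts."""
    return ["redis-server", "--io-threads", str(io_threads_for(cpus_per_node))]


print(redis_server_args(4))  # nodes with 4 CPUs, as in the synthetic tests
print(redis_server_args(2))  # nodes with 2 CPUs
```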

Applying the Knowledge Gained: Resource Optimization in a Real Scenario

The scenario: an application deployed on Kubernetes, making intensive use of a Redis cluster and requiring extremely high request-per-second rates.

The Redis cluster, during peak usage periods, must scale to 100 nodes in order to support the required performance. Currently, this cluster is made up of nodes instantiated from the Redis Stack 7.2 image, with 1 CPU per node.

Our goal is to reduce the number of cluster nodes by half, moving from 100 to 50 nodes using the Redis 8 image. To achieve this, we doubled the number of CPUs per node from 1 to 2 and set the io-threads configuration to 4, as previously determined, in order to benefit from the improvements offered by the new I/O threading implementation.

To validate that the platform with this new configuration is capable of supporting peak usage periods, we used a set of complex tests specifically designed to subject the platform to workloads similar to those expected during those peak periods, thus validating its ability to withstand extreme demand.

These End-to-End (E2E) tests were custom-developed using Spring Framework and the Redis Lettuce client.

Taking advantage of the platform’s maintenance window, the full test suite was executed using the following configurations in order to compare the results:

- Redis Stack 7.2 - 100 nodes (1 CPU)

- Redis 8 - 50 nodes (2 CPU)

- Valkey 8 - 50 nodes (2 CPU)

We also leveraged the Redis Operator developed to manage all Redis clusters (currently released as open source). Alongside the cluster pods, this operator deploys an additional pod that extracts a large number of metrics and exposes them to Prometheus, together with Kubernetes metrics, so they can be displayed in Grafana dashboards.

Let’s review the results obtained.

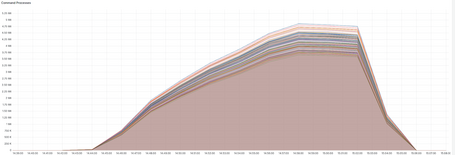

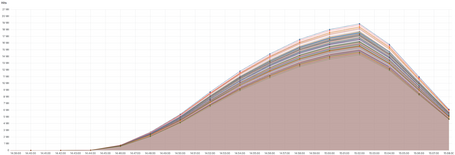

Processed Commands

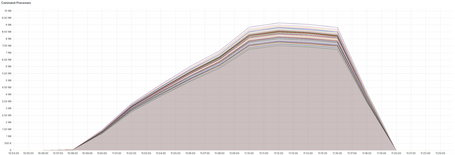

We can see how the cluster built with Redis 8 takes advantage of the io-threads configuration and the new implementation, nearly matching the number of commands processed per unit of time by the reference Redis Stack 7.2 cluster. Each node practically doubles the number of processed commands per unit of time compared to Redis Stack 7.2.

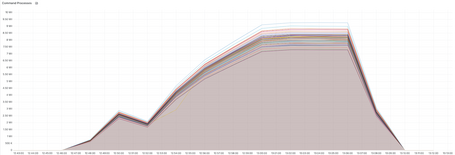

Valkey 8 shows behavior similar to Redis 8. Unfortunately, at the beginning of the test, one node pod was evicted, which explains the small drop visible in the chart.

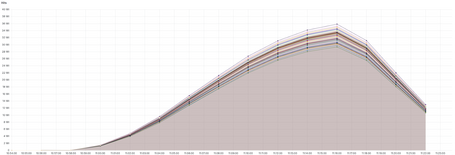

Hits

The same behavior observed for processed commands per unit of time is repeated in the number of hits. Both Redis 8 and Valkey 8 nearly achieve the reference performance of Redis Stack 7.2 using half the number of nodes.

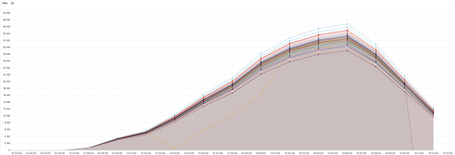

To provide an idea of the amount of data handled during the tests, the following charts show the number of keys stored per node.

Cache Entries

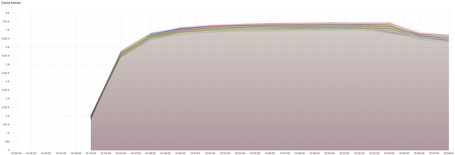

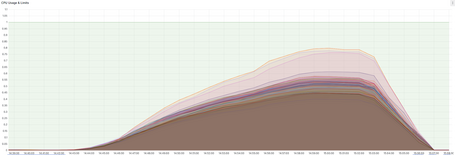

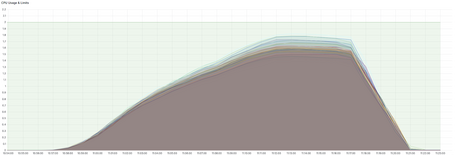

If we take a look at CPU usage, we see more consistent results in Redis 8 than in Valkey 8.

CPU Usage

To conclude the comparison of the collected metrics, let’s take a look at the network outbound throughput of the nodes.

Network Out Throughput

Final Conclusions

The new I/O threading implementation included in Redis 8 and Valkey 8 truly makes a difference compared to previous implementations. By using multiple threads to write command results to the socket, the load on the main thread is significantly reduced, enabling an impressive increase in performance per node.

From the test data, we can conclude that a per-node performance improvement of 90–95% was achieved.
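A quick calculation shows how this figure relates to the node count. Assuming the 90–95% improvement is a per-node gain and that throughput scales linearly with the number of nodes (both are simplifications), the 50-node cluster reaches about 95% to 97.5% of the 100-node reference, which matches the "nearly matching" behavior seen in the charts. The `relative_capacity` function is a hypothetical helper for this sketch.

```python
def relative_capacity(nodes, per_node_gain, reference_nodes=100):
    """Cluster throughput relative to the reference cluster (1.0 = equal).

    Assumes linear scaling with node count, which is a simplification.
    """
    return nodes * (1 + per_node_gain) / reference_nodes


for gain in (0.90, 0.95):
    share = relative_capacity(50, gain)
    print(f"{gain:.0%} per-node gain -> {share:.1%} of the reference throughput")
```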

Using Redis 8, with the improvements it introduces and especially with its I/O Threading implementation, we can achieve a considerable reduction in resource consumption. After the tests, we were able to handle peak periods using half the number of nodes per cluster while increasing CPU allocation from 1 to 2 per node.

If we look only at the Redis metrics presented here (and several others omitted because they were less interesting), Redis 8 and Valkey 8 show very similar performance. However, we also reviewed logs and client application metrics. There we observed that Redis 8 behaved more consistently than Valkey 8. It maintained more stable throughput rates throughout the tests, while Valkey 8 showed some fluctuations.

Redis 8, after returning to the open-source world, has demonstrated impeccable performance. It has also shown a higher level of optimization, in addition to behaving more stably and consistently under different io-threads configurations.

For all these reasons, we are sticking with Redis 8, at least for now.
