1 The problem of unstructured information

In today's companies a lot of information flows from one computer to another: e-mails, documents, many other kinds of files and, of course, the web pages employees browse. These electronic documents probably contain part of the company's core knowledge or, at least, very useful information which, although easily readable by humans, is unstructured and cannot be processed automatically by computers as it is. Around 85% of the information in enterprises is unstructured nowadays [2], and this is a disadvantage for business, since processes are difficult to automate and data is hard to find (unless a very well-defined storage schema is set up, and even then the success of that system relies on every employee following it). Unstructured data is data that either lacks a formally defined structure or has a structure inherent to human communication that is not prepared for use by computers. As said before, examples are text, web pages, images, e-mails and so on. To simplify, hereinafter we will consider everything that does not come from a database or an API to be unstructured data.

Scraping is the technique used to extract data from these sources, and perhaps the most common type is so-called web scraping, used to obtain relevant information from sites on the Internet. Scraping is very useful for extracting information from documents or sources that are always organized in the same way. However, when the layout may change quickly over time or differ widely among sources (as usually happens on the web), scraping becomes an endless task. Once the desired data has been extracted in a form that computers can process, a second problem arises. Since documents are created by humans for humans, the information is written in what is called "natural language", the way we talk or write: human language. Hence, the information is still raw, and it requires a processing step before machines can manipulate it and perform any kind of computation with it. There are many Natural Language Processing (NLP) approaches, but at this point it is enough to know that NLP aims to extract the meaning of texts (or even speech).
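To make the fixed-layout assumption concrete, here is a minimal sketch using only Python's standard-library `html.parser`. The page fragment, the ticker symbols and the class name `TableRowScraper` are hypothetical; the point is that the extractor only works because it knows the data sits in `<td>` cells, so a layout change would silently break it.

```python
from html.parser import HTMLParser


class TableRowScraper(HTMLParser):
    """Collect the text of every <td> cell, grouped by <tr> row.

    Assumes a fixed layout: the wanted data lives in table cells.
    If the page layout changes, this extractor silently breaks.
    """

    def __init__(self):
        super().__init__()
        self.rows = []          # finished rows, each a list of cell strings
        self._row = None        # row currently being built
        self._in_cell = False   # True while inside a <td> element
        self._cell = []         # text fragments of the current cell

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._in_cell = True
            self._cell = []

    def handle_endtag(self, tag):
        if tag == "td" and self._in_cell:
            self._row.append("".join(self._cell).strip())
            self._in_cell = False
        elif tag == "tr" and self._row is not None:
            if self._row:  # skip rows without <td> cells (e.g. header rows)
                self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        if self._in_cell:
            self._cell.append(data)


# Hypothetical page fragment with a stable, known layout.
html = """
<table>
  <tr><th>Symbol</th><th>Name</th><th>Change</th></tr>
  <tr><td>AAA</td><td>Example Corp</td><td>+1.25</td></tr>
  <tr><td>BBB</td><td>Sample Inc</td><td>-0.40</td></tr>
</table>
"""

scraper = TableRowScraper()
scraper.feed(html)
print(scraper.rows)
# [['AAA', 'Example Corp', '+1.25'], ['BBB', 'Sample Inc', '-0.40']]
```

The extracted cells are still plain strings: the scraper recovers the layout, not the meaning.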

2 Unitex Corpus Processor

Unitex was developed by the linguistics group (Prof. Eric Laporte) of the Institut Gaspard Monge, Université de Marne-La-Vallée. It is a corpus processing system based on automata-oriented technology, able to perform operations such as:

- Applying electronic dictionaries, which you can create ad hoc for a particular domain.

- Pattern matching with recursive transition networks.

- Resolving ambiguity by means of the text automaton.
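Recursive transition networks can be illustrated with a small Python sketch. This is not Unitex's implementation (Unitex grammars are graphs drawn in its editor and compiled); it is a naive backtracking matcher under these assumptions: each network is a set of states whose transitions consume a given word, consume any word carrying a given category in a toy lexicon, or call another network, which is the recursive part. The grammar, lexicon and function names are invented for illustration.

```python
from typing import Dict, List, Set, Tuple

# A label is ("word", w), ("cat", POS) or ("call", network_name).
Label = Tuple[str, str]
# A network is (start_state, final_states, transitions by source state).
Network = Tuple[int, Set[int], Dict[int, List[Tuple[Label, int]]]]


def match(networks: Dict[str, Network], name: str, tokens: List[str],
          lexicon: Dict[str, Set[str]], pos: int) -> Set[int]:
    """Return every token position at which network `name`, started at
    `pos`, can reach a final state. All paths are explored (backtracking);
    a left-recursive network would recurse forever in this naive version."""
    start, finals, transitions = networks[name]
    ends: Set[int] = set()
    agenda = [(start, pos)]  # (state, position) pairs still to explore
    seen = set()
    while agenda:
        state, i = agenda.pop()
        if (state, i) in seen:
            continue
        seen.add((state, i))
        if state in finals:
            ends.add(i)
        for (kind, value), nxt in transitions.get(state, []):
            if kind == "call":
                # Recursive step: run the sub-network starting at position i.
                for j in match(networks, value, tokens, lexicon, i):
                    agenda.append((nxt, j))
            elif i < len(tokens):
                tok = tokens[i]
                if (kind == "word" and tok == value) or (
                        kind == "cat" and value in lexicon.get(tok, set())):
                    agenda.append((nxt, i + 1))
    return ends


# Hypothetical toy grammar: NP = N | DET ADJ* N ;  S = NP V NP
networks: Dict[str, Network] = {
    "NP": (0, {2}, {0: [(("cat", "DET"), 1), (("cat", "N"), 2)],
                    1: [(("cat", "ADJ"), 1), (("cat", "N"), 2)]}),
    "S": (0, {3}, {0: [(("call", "NP"), 1)],
                   1: [(("cat", "V"), 2)],
                   2: [(("call", "NP"), 3)]}),
}
lexicon = {"the": {"DET"}, "old": {"ADJ"}, "linguist": {"N"},
           "writes": {"V"}, "grammars": {"N"}}
tokens = "the old linguist writes grammars".split()

print(match(networks, "S", tokens, lexicon, 0))  # {5}: the whole sentence is an S
```

Returning a set of end positions, rather than a single boolean, is what lets a calling network continue from wherever the sub-network stopped.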

Unitex can also perform advanced operations, such as ELAG (Elimination of Lexical Ambiguities by Grammars), which disambiguates lexical symbols in text automata, and cascades of transducers (the prototype of the CasSys system was created in 2002 at the LI labs of the University of Tours), which are applied one after the other to a text in order to modify it.
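The idea behind a cascade of transducers, where each stage rewrites the text produced by the previous one, can be sketched in a few lines. In this sketch, regular-expression substitutions stand in for Unitex graphs, and the tag names (`pct`, `move`) are hypothetical; CasSys itself works with Unitex transducers, not regexes.

```python
import re
from typing import Callable, List

# A "transducer" here is any function that rewrites a text into a new text.
Transducer = Callable[[str], str]


def regex_transducer(pattern: str, replacement: str) -> Transducer:
    """Stand-in for a Unitex graph: rewrite every match of `pattern`."""
    compiled = re.compile(pattern)
    return lambda text: compiled.sub(replacement, text)


def cascade(transducers: List[Transducer], text: str) -> str:
    """Apply each transducer to the output of the previous one."""
    for transducer in transducers:
        text = transducer(text)
    return text


stages = [
    # Stage 1: mark percentages such as "2.5%".
    regex_transducer(r"(\d+(?:\.\d+)?)%", r"<pct>\1%</pct>"),
    # Stage 2: only works because stage 1 already inserted the <pct> tags.
    regex_transducer(r"\b(up|down) (<pct>[^<]*</pct>)",
                     r'<move dir="\1">\1 \2</move>'),
]

print(cascade(stages, "Example Corp was up 2.5% today."))
# Example Corp was <move dir="up">up <pct>2.5%</pct></move> today.
```

The order of the stages matters: swapping them would leave stage 2 with nothing to match.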

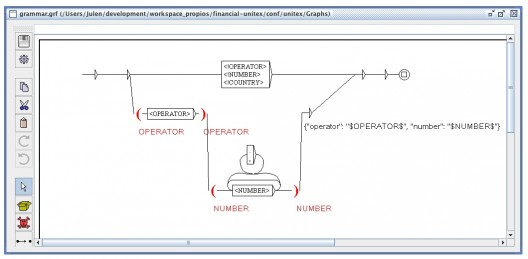

A very simple example of Unitex grammar is shown in the following figure:

Unitex has been applied in several research papers, e.g.:

- Portuguese Large-scale Language Resources for NLP Applications

- Syntactic variation of support verb constructions

- XML-Based Representation Formats of Local Grammars for the NL

- Spanish adverbial frozen expressions

Unitex provides a great user interface to manage grammars and dictionaries; in addition, Paradigma Labs provides a fast binding to perform specific operations on a text.

3 UnitexManager

Unitex-manager is a Python module that provides a high-level layer to easily work with the Unitex Corpus Processor described above. It is built on pyUnitex, a minimalist Python wrapper around the C interface of Unitex.

Natural Language Processing requires a first stage of language recognition, followed by a transformation of the whole text into simpler units, usually sentences. Hence, the text is tokenized first and then each sentence is POS-tagged, labeling each word with its grammatical and/or semantic function. For that purpose, different dictionaries are used: some of them are included with Unitex (basic language resources), while others (for entity recognition, for example) must be prepared in advance by a documentalist. Finally, the tagged sentences are run through a grammar (a Unitex graph) that generates the desired output.
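As a rough illustration of dictionary-based tagging, the sketch below parses entries written in a simplified version of Unitex's DELAF style (`form,lemma.CODE+SEM:INFL`) and attaches every candidate part of speech to each token. The mini-dictionary, the treatment of an empty lemma as "same as the form" and the helper names are assumptions; real DELAF files have features (escaping, multi-word units) this parser does not handle. Ambiguity is kept, not resolved: resolving it is left to later steps.

```python
from typing import Dict, List, NamedTuple, Tuple


class Entry(NamedTuple):
    lemma: str
    pos: str               # grammatical code, e.g. "N", "V", "DET"
    semantic: List[str]    # semantic codes after "+", e.g. ["Conc"]
    inflection: List[str]  # inflectional codes after ":", e.g. ["s"]


def parse_entry(line: str) -> Tuple[str, Entry]:
    """Parse a simplified DELAF-style line: form,lemma.CODE+SEM:INFL."""
    form, rest = line.split(",", 1)
    lemma, codes = rest.rsplit(".", 1)
    grammatical, *inflection = codes.split(":")
    pos, *semantic = grammatical.split("+")
    # Assumption: an empty lemma means "same as the inflected form".
    return form, Entry(lemma or form, pos, semantic, inflection)


def load_dictionary(text: str) -> Dict[str, List[Entry]]:
    """Index entries by inflected form; one form may have several entries."""
    dictionary: Dict[str, List[Entry]] = {}
    for line in text.splitlines():
        if line.strip():
            form, entry = parse_entry(line.strip())
            dictionary.setdefault(form, []).append(entry)
    return dictionary


def tag(tokens: List[str],
        dictionary: Dict[str, List[Entry]]) -> List[Tuple[str, List[str]]]:
    """Attach every candidate POS to each token; unknown tokens get []."""
    return [(tok, sorted({e.pos for e in dictionary.get(tok, [])}))
            for tok in tokens]


# Hypothetical mini-dictionary; "rose" is ambiguous (noun, or past of "rise").
DICTIONARY = """
the,.DET
stock,.N:s
rose,rise.V
rose,.N+Conc:s
"""

dictionary = load_dictionary(DICTIONARY)
print(tag("the stock rose".split(), dictionary))
# [('the', ['DET']), ('stock', ['N']), ('rose', ['N', 'V'])]
```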

The Unitex-manager interface contains three methods, one for each of these actions (tokenizing, tagging and applying the grammar):

```
tokenizer(input_str, lang)
    Given a text and its language, returns an array containing its
    sentences, split on the dot (".") character.

postagger(tokens, lang)
    Given an array of sentences (and its language), returns the same
    sentences tagged with Part-of-Speech labels.

grammar(tokens, pos, lang)
    Evaluates the given POS-tagged sentences with the grammar set up
    in the configuration file.
```
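The contract of the three methods listed above can be mimicked with hypothetical stand-ins to show how the output of each step feeds the next. These are not Unitex-manager's implementations (which delegate to Unitex through pyUnitex): the lexicon is a toy, the `lang` argument is ignored, and the "grammar" simply reports a noun immediately followed by a verb.

```python
from typing import Dict, List, Tuple

Tagged = List[Tuple[str, str]]  # one sentence as (word, POS) pairs

# Hypothetical toy lexicon standing in for the Unitex dictionaries.
LEXICON: Dict[str, str] = {"the": "DET", "nasdaq": "N", "stocks": "N",
                           "rose": "V", "fell": "V"}


def tokenizer(input_str: str, lang: str) -> List[str]:
    """Split the text into sentences on "." (lang is ignored here)."""
    return [s.strip() for s in input_str.split(".") if s.strip()]


def postagger(tokens: List[str], lang: str) -> List[Tagged]:
    """Tag each word of each sentence; unknown words get "UNK"."""
    return [[(w, LEXICON.get(w.lower(), "UNK")) for w in s.split()]
            for s in tokens]


def grammar(tokens: List[str], pos: List[Tagged],
            lang: str) -> List[Tuple[str, str]]:
    """Toy 'grammar': report every noun immediately followed by a verb.
    `tokens` is unused; it is kept only to mirror the documented signature."""
    matches = []
    for tagged in pos:
        for (w1, t1), (w2, t2) in zip(tagged, tagged[1:]):
            if t1 == "N" and t2 == "V":
                matches.append((w1, w2))
    return matches


text = "Nasdaq stocks rose. The market opened."
sentences = tokenizer(text, "en")
tagged = postagger(sentences, "en")
print(grammar(sentences, tagged, "en"))
# [('stocks', 'rose')]
```

Because the documented tokenizer splits on the dot character alone, decimals such as "2.5" and abbreviations such as "Inc." would also be split; the stand-in reproduces that behaviour rather than fixing it.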

4 A practical case

To illustrate the use of Unitex-Manager, we have prepared a practical case of unstructured information retrieval and processing. In this case, we follow the evolution of the most active stocks on the NASDAQ stock exchange during the day.

First of all, a reliable source of information is needed. Financial information is scattered across a real mess of websites; however, we found that Yahoo! Finance provides exactly the required information, already compiled and frequently updated. Once the source is found, it is necessary to analyze its structure and prepare a web scraper. In our case, we wrote our own scraper in Ruby, which is launched periodically to extract the symbol and name of each company as well as its last change.

This text is passed to Unitex-Manager and processed with the workflow described above to extract the following entities:

- Company name

- Symbol

- Change

- Trend
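In the actual workflow these entities are extracted by a Unitex grammar. As a hedged stand-in, the sketch below applies a regular expression to one scraped line in a hypothetical format, `<Company name> (<SYMBOL>) <signed change>`, and derives the trend from the sign of the change.

```python
import re
from typing import Dict, Optional, Union

# Hypothetical format of one scraped line: "<Company name> (<SYMBOL>) <signed change>"
LINE = re.compile(
    r"^(?P<name>.+?)\s+\((?P<symbol>[A-Z.]+)\)\s+(?P<change>[+-]?\d+(?:\.\d+)?)$")


def extract_entities(line: str) -> Optional[Dict[str, Union[str, float]]]:
    """Return company name, symbol, change and trend, or None if the line
    does not have the expected shape."""
    m = LINE.match(line.strip())
    if m is None:
        return None
    change = float(m.group("change"))
    # Trend is derived from the sign of the change.
    trend = "up" if change > 0 else "down" if change < 0 else "unchanged"
    return {"name": m.group("name"), "symbol": m.group("symbol"),
            "change": change, "trend": trend}


print(extract_entities("Example Corp (AAA) +1.25"))
# {'name': 'Example Corp', 'symbol': 'AAA', 'change': 1.25, 'trend': 'up'}
```

Returning `None` for lines that do not fit keeps a layout change from producing silently wrong entities.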

Every hour we extract this information, compute the top five most active NASDAQ companies based on the absolute value of their change, and tweet this top 5 from the Financial Unitex account, so you can easily follow how the stock exchange evolves.
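The hourly ranking step can be sketched with `heapq.nlargest`, ranking by the absolute value of the change so that large drops count as much as large rises. The quote data and the tweet wording are hypothetical, and the actual posting to Twitter is left out.

```python
import heapq
from typing import Dict, List, Union

Quote = Dict[str, Union[str, float]]


def top_movers(quotes: List[Quote], n: int = 5) -> List[Quote]:
    """The n quotes with the largest absolute change, biggest first."""
    return heapq.nlargest(n, quotes, key=lambda q: abs(q["change"]))


def format_tweet(movers: List[Quote]) -> str:
    """Render the ranking as one short line of text."""
    items = ", ".join(f"{q['symbol']} {q['change']:+.2f}" for q in movers)
    return f"Top {len(movers)} NASDAQ movers: {items}"


# Hypothetical hourly snapshot.
quotes = [
    {"symbol": "AAA", "change": 1.25},
    {"symbol": "BBB", "change": -3.10},
    {"symbol": "CCC", "change": 0.05},
    {"symbol": "DDD", "change": 2.40},
    {"symbol": "EEE", "change": -0.75},
    {"symbol": "FFF", "change": 0.90},
]

print(format_tweet(top_movers(quotes)))
# Top 5 NASDAQ movers: BBB -3.10, DDD +2.40, AAA +1.25, FFF +0.90, EEE -0.75
```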
