In recent years, Test-Driven Development (TDD) has become both a way of working and a change of mindset in the IT world. Unfortunately, there are still holdouts in the sector, whether because of attitude (“this is worthless”) or because of pressing deadlines (“this is a waste of time”).

In this post we will explain what it consists of, what its basic principles are, what it means to adopt this methodology and what advantages it brings.

Ready?

What is TDD?

TDD stands for Test-Driven Development. It is a development process that consists of repeatedly writing tests, writing the code and refactoring what has been built.

The main idea of this methodology is to write the unit tests first for the code we have to implement. That is to say, first we code the test and then we develop the business logic. This is a somewhat simplified view of what it involves since, from my point of view, it also gives us a wider vision of what we are going to develop. In a way, it helps us to design our system better (at least, that has always been my experience).

For a unit test to be useful and for this methodology to succeed, we need to settle the following points before starting to code:

- Have well-defined requirements for the functionality to implement. Without a definition of requirements, we can’t begin to code. We must know what is wanted and what implications it may have for the code to be developed.

- Acceptance criteria, considering all possible cases, both success and error.

Let's imagine a system for registering player records. What acceptance criteria could we have?

- If the player registers successfully, a success message must be shown, such as “The player with the ID ‘X’ has successfully registered”.

- If a player with a duplicated ID is found, an error message must be shown, such as “The player with ID ‘X’ has not been successfully registered as there is already a player with the same ID”.

- If any of the fields is left blank, a validation message must be shown indicating that the field is obligatory or which formatting error is causing the problem.

- How we are going to design the test. To write a good unit test we must limit ourselves to testing only the business logic we want to implement, isolating it from the other layers or services that interact with our logic and simulating the results of those interactions (mocks). There are always different perspectives here, each with advantages and disadvantages. In my opinion, in the end it has to be the developer who chooses the most comfortable option, the one that provides the most efficiency and information on both a technical and a conceptual level (although that belongs to another conversation).

- What we want to test. The acceptance criteria example above gives us clues about what we should test before coding. Each case of each acceptance criterion should have its associated test.

For example, if the “validation error” case is a missing mandatory field, we should write a test for that case. If it is a “format validation error”, we should write a separate test for that one.

- How many tests are necessary? As many as the cases we find. That way, we can be confident that our test coverage is strong enough to ensure the developed code works correctly.
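As a rough illustration of the points above, here is a minimal sketch of how the player registration criteria could turn into unit tests. Everything in it is hypothetical: the `PlayerService` class, the repository with `exists` and `save`, the exception names and the "digits only" ID format rule are assumptions made for the example, not part of any real system. The repository is replaced by a mock so that only the business logic is tested.

```python
import unittest
from unittest.mock import Mock


class ValidationError(Exception):
    """Raised when a field is blank or badly formatted (hypothetical)."""


class DuplicatePlayerError(Exception):
    """Raised when the player ID is already registered (hypothetical)."""


class PlayerService:
    """Hypothetical business logic for registering players."""

    def __init__(self, repository):
        self.repository = repository  # collaborator, mocked in the tests

    def register(self, player_id, name):
        if not player_id or not name:
            raise ValidationError("All fields are obligatory")
        if not player_id.isdigit():  # assumed format rule: digits only
            raise ValidationError("The ID must contain only digits")
        if self.repository.exists(player_id):
            raise DuplicatePlayerError(
                f'The player with ID "{player_id}" has not been successfully '
                "registered as there is already a player with the same ID"
            )
        self.repository.save(player_id, name)
        return f'The player with the ID "{player_id}" has successfully registered'


class PlayerServiceTest(unittest.TestCase):
    """One test per case of each acceptance criterion."""

    def setUp(self):
        self.repository = Mock()  # simulates the persistence layer
        self.repository.exists.return_value = False
        self.service = PlayerService(self.repository)

    def test_successful_registration(self):
        message = self.service.register("7", "Ana")
        self.assertEqual(
            message, 'The player with the ID "7" has successfully registered'
        )
        self.repository.save.assert_called_once_with("7", "Ana")

    def test_duplicated_id(self):
        self.repository.exists.return_value = True
        with self.assertRaises(DuplicatePlayerError):
            self.service.register("7", "Ana")
        self.repository.save.assert_not_called()

    def test_blank_field(self):
        with self.assertRaises(ValidationError):
            self.service.register("7", "")

    def test_format_error(self):
        with self.assertRaises(ValidationError):
            self.service.register("abc", "Ana")
```

Saved in a file, the tests can be run with `python -m unittest`. In a real TDD session the test class would be written before `PlayerService`; both appear together here only to keep the sketch self-contained.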

Principles on which TDD is based

Some of the principles on which TDD is based are the so-called SOLID principles. For those who haven’t heard of them, here is a brief description:

- Single responsibility principle (SRP). A class or module should have a single responsibility. Robert C. Martin expresses the principle in the following way:

“A class should only have one reason to change.”

- Open/closed principle (OCP). A class should be open to extension without having to be modified. Given that software requires changes and that some entities depend on others, modifications to the code of one of them can trigger a cascade of undesirable side effects. For example:

![OCP example](https://www.paradigmadigital.com/wp-content/uploads/2017/04/TDD-3-1.png)

- Liskov substitution principle (LSP). If a function receives an object of type X as a parameter and is instead given an object of type Y (which derives from X), the function should still behave correctly. A function that doesn’t fulfil the LSP automatically breaks the OCP, because to work with child classes it must know about them and be modified whenever a new one is added. For example:

The Programmer class must work correctly with the Vehicle class or with any of its subclasses. The LSP is liable to be broken when situations like the one in the picture on the right occur.

![LSP example](https://www.paradigmadigital.com/wp-content/uploads/2017/04/blog.png)

- Interface segregation principle (ISP). When we apply the SRP we are also applying the ISP. The ISP holds that we should not force classes (or interfaces) to depend on classes or interfaces that they don’t need to use. Such an imposition occurs when a class or interface has more methods than its clients need.

- Dependency inversion principle (DIP). These are techniques for handling collaborations between classes so as to produce code that is reusable, restrained and ready for change.
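To make a few of these principles more concrete, here is a small sketch built around a `Vehicle` abstraction. It is not a reconstruction of the pictures above: the `Car`, `Bicycle` and `FakeVehicle` classes, the `max_speed` method and the `fastest` function are all invented for the example.

```python
from abc import ABC, abstractmethod
from typing import Sequence


class Vehicle(ABC):
    """Abstraction that the high-level code depends on (DIP)."""

    @abstractmethod
    def max_speed(self) -> int:
        """Top speed in km/h."""


class Car(Vehicle):
    def max_speed(self) -> int:
        return 180


class Bicycle(Vehicle):
    # Added as an extension: Vehicle, Car and fastest() stay untouched (OCP).
    def max_speed(self) -> int:
        return 30


def fastest(vehicles: Sequence[Vehicle]) -> Vehicle:
    """Works with any Vehicle subclass, with no type checks (LSP)."""
    return max(vehicles, key=lambda vehicle: vehicle.max_speed())


class FakeVehicle(Vehicle):
    """Test double: possible because fastest() only knows the abstraction."""

    def __init__(self, speed: int):
        self._speed = speed

    def max_speed(self) -> int:
        return self._speed


winner = fastest([Bicycle(), Car(), FakeVehicle(90)])
print(type(winner).__name__)  # prints "Car"
```

The link with TDD is the last class: because `fastest` depends only on the `Vehicle` abstraction, a test can hand it a fake with whatever speed the case requires.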

Lifecycle

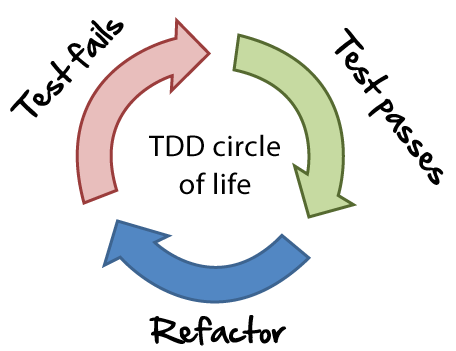

The TDD lifecycle is based on continuous coding and refactoring. How do we do this?

- Choose a requirement. From the list, choose the requirement that we think will give us the best understanding of the problem and that is also easy to implement.

- Code the test. Start by writing a test for the requirement. The specifications and requirements of the functionality to be implemented need to be clear. This step forces the programmer to take the client’s perspective by considering the code through its interfaces. As mentioned earlier in this post, we must be clear about what we are going to test and what approach we are going to follow, so that what we test is meaningful and shows that our development is correct. In our case, when we write a unit test we should focus on the business logic under test and not on its dependencies.

- Verify that the test fails. If the test doesn’t fail it’s because the requirement was already implemented or because the test is wrong.

- Code the implementation. Write the simplest possible code that makes the test pass.

- Run the automated tests. Verify that the whole test suite passes.

- Refactor. The final coding step is refactoring, which is mainly used to remove duplicated code, unnecessary dependencies, etc.

- Update the list of requirements. Cross the implemented requirement off the list.
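The cycle above can be sketched with the "blank field" requirement from the player example. The function name `validate_player`, the field names and the message wording are assumptions for illustration. Both the first version and the refactored version are kept in the same file only so that the progression is visible; in practice the refactored code would replace the first one.

```python
import unittest


# Code the test: it is written first. If it were run before
# validate_player existed, it would fail with a NameError ("red").
class ValidatePlayerTest(unittest.TestCase):
    def test_blank_name_is_reported(self):
        errors = validate_player({"id": "7", "name": ""})
        self.assertEqual(errors, ["The field 'name' is obligatory"])

    def test_complete_player_has_no_errors(self):
        self.assertEqual(validate_player({"id": "7", "name": "Ana"}), [])


# Code the implementation: the simplest code that passes ("green").
def validate_player_first_version(fields):
    errors = []
    if fields["id"] == "":
        errors.append("The field 'id' is obligatory")
    if fields["name"] == "":
        errors.append("The field 'name' is obligatory")
    return errors


# Refactor: remove the duplicated checks. The same tests must still pass.
def validate_player(fields):
    return [
        f"The field '{name}' is obligatory"
        for name, value in fields.items()
        if value == ""
    ]
```

Running `python -m unittest` after each step is what tells us whether we are in the failing or the passing state, and whether the refactoring has changed any behaviour.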

Consequences of introducing this methodology in a project

On the different projects that I have worked on throughout my professional career, I have often found it impossible to introduce this methodology or to persuade the people in charge to change their work philosophy (not always, thankfully).

As I said at the start of the post, we keep running into the typical cases in which people see no use in adopting this methodology. The “excuses” are always the same: “this is worthless”, “we don’t have time”, “I’m not used to…”. In general, and from my point of view, these phrases come from unfamiliarity and a failure to see its advantages. I thought the same at the start (when you’re young you say lots of silly things), but then you see it’s not like that…

In general, on the projects where I have used TDD, it has helped me to detect requirements that the business had missed, to design my business logic better by separating components and layers (which helps when a member of the team is prone to coupling too much code together), and to prevent errors.

I do not know whether it was good luck (although in our sector we know that luck is not usually on our side when it comes to development), but on these projects the number of errors fell drastically compared with others where I did not use TDD (other factors, such as the technical side, organisation and communication, obviously have an influence too).

For the programmer, it is a huge change of mindset in the way they process and manage information. It is hard to get used to at first, but there comes a point at which they become much more productive and efficient at writing the test and developing the code in a simple way.

If a test is well written and well defined, we can be almost certain that our business logic is correct and that, when an incident occurs, the failure most likely comes from the data that external systems provide. That is also a way of fencing in errors.

For example, not long ago I came across a case in which the shipping costs of a purchase were €0. As I knew it was quite unlikely that my development was the cause (since it was already well covered by unit tests), I realised that the error came from an external cache that populated a series of tables, whose purpose was to return the fields needed to calculate the cost.
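A hedged sketch of that kind of reasoning follows. The real shipping rule and cache are not described in this post, so `shipping_cost`, `rate_provider`, `get_rates` and the rate fields are invented for illustration. The idea is that, with the external source stubbed out, the tests pin down the calculation itself, so a €0 result in production points at the data rather than at the logic.

```python
import unittest
from unittest.mock import Mock


def shipping_cost(weight_kg, rate_provider):
    """Hypothetical rule: a base fee plus a per-kilo rate.

    rate_provider stands in for the external source of rate data.
    """
    rates = rate_provider.get_rates()
    return rates["base_fee"] + rates["per_kg"] * weight_kg


class ShippingCostTest(unittest.TestCase):
    def test_cost_with_known_rates(self):
        provider = Mock()  # stub for the external data source
        provider.get_rates.return_value = {"base_fee": 3.0, "per_kg": 1.5}
        self.assertEqual(shipping_cost(2, provider), 6.0)

    def test_zero_rates_give_zero_cost(self):
        # With correct logic, a zero cost can only come from the data.
        provider = Mock()
        provider.get_rates.return_value = {"base_fee": 0.0, "per_kg": 0.0}
        self.assertEqual(shipping_cost(2, provider), 0.0)
```

With the first test passing, the arithmetic is known to be right for valid rates, which narrows the search to whatever is feeding the rates.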

In the end, you save time debugging and you home in quickly and efficiently on the possible problems. This translates into fewer hours of maintenance and of “diving” into the code to see what is failing. Something that might have taken an hour to work out took only 10 minutes.

The main disadvantage that I see in this methodology is that it is not suitable (at least in my opinion) for integration tests, since we need to know the data in the repository and verify that its content is as expected after carrying out a transaction against a database (even an in-memory one, which would be ideal).

For this type of integration or functional test there are frameworks such as Concordion that offer interesting solutions, although that is a topic for another post.

So, what are the benefits TDD offers us?

- Better quality of developed code.

- Design driven by actual needs.

- Simplicity: we focus on the specific requirement.

- Reduced redundancy.

- More productivity (less time debugging).

- Reduction in the number of errors.

Conclusion

I encourage you to try this way of developing your applications as much as possible (from my point of view it’s worth it), or at least to get to know it, because it is becoming more widespread in our sector every day.

And of course, I do not hold the absolute truth: within each team there is a discussion about how best to carry it out, and I have surely missed many things. In the end, a post comes down to trying to explain an idea, in a simple way, based on experience.

Lastly, I recommend the book by Kent Beck, “Test Driven Development: By Example”: very interesting material by one of the gurus on this topic.
