In a previous post, we looked at how to centralize Internet egress in a centralized networking model, and we noted that this type of centralization allows us to add more solutions and features to our environments. Today, we will go further by centralizing VPC Endpoints and allowing different VPCs to consume them transparently. It is one more small step in centralizing specific network resources, and it has some attractive advantages.

What is a VPC Endpoint?

A VPC Endpoint is a resource that allows us to consume AWS services privately: we reach the service through a private IP instead of connecting to the service's public IPs.

There are two types of VPC Endpoints, gateway and interface. Interface endpoints, which use AWS PrivateLink to provide this private connectivity, are the ones we can centralize.

These endpoints generate a network interface within the subnets we indicate in our VPC. With this, our connectivity uses an IP within our private range.

This service has many advantages. The first and most straightforward is that connectivity occurs within the VPC; therefore, we don't need to use NAT Gateways or another Egress solution to communicate with AWS services.

Also, at a security level, it is crucial: although we do not leave AWS, using public IPs makes security teams uneasy, and sometimes all communication must be private to meet certain compliance levels.

Finally, we can also reduce costs, since the cost of data processing is much lower with VPC Endpoints than with NAT Gateway or other solutions.

Even if we use appliances for egress, it is worth reducing the data that the appliance has to process towards AWS services, since we reduce the load and, therefore, the compute we need (we can use smaller and cheaper instances).

Why centralize VPC Endpoints?

We have already discussed the benefits of the service, but it can take work to use. It is an infrastructure service that requires knowing which AWS endpoints we will use and all the DNS names that those AWS services use.

In addition, the service has a cost per Endpoint deployed, so the price of these can increase significantly in companies that use multiple Endpoints.

For this reason, centralizing this service has many advantages if we have a centralized egress solution or if we already have Transit Gateway configured:

- We save costs by deploying a smaller number of endpoints.

- If we offer the service as part of an AWS account baseline, all accounts will have the service automatically and transparently for projects.

- We increase security compliance by using private communication with AWS services.

Centralized VPC Endpoints Architecture

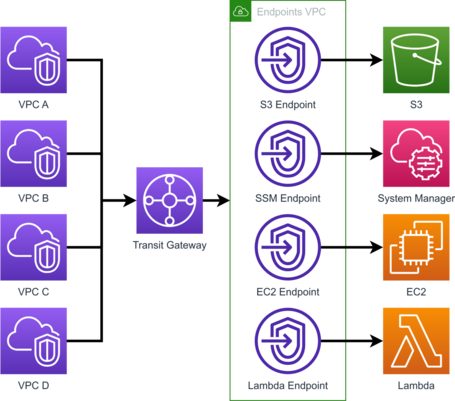

This architecture would use the Transit Gateway to interconnect the VPCs with a VPC in which the centralized endpoints are deployed.

It's a centralized solution that requires a Transit Gateway, so it only makes sense if you have a Transit Gateway in use or plan to deploy it for other use cases.

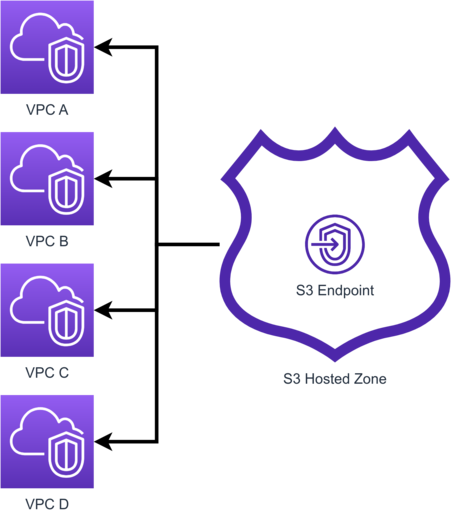

In addition, it requires Route 53 so that the name of the public endpoint resolves to our VPC Endpoint. For this, we must generate hosted zones with the names of the public endpoints, associated with the VPC where we have the endpoints.

Within this hosted zone, we generate the required DNS records.

Now, let's look at the configuration in a little more detail.

Transit Gateway

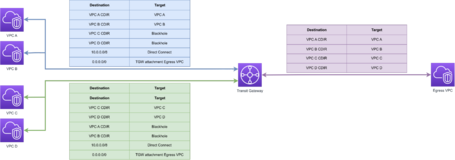

At the Transit Gateway level, our VPCs need to reach the endpoints VPC. To do this, we simply need to generate the routes that connect our VPCs with the endpoints VPC.

In this case, we have three route tables, the first being a route table for VPC A and VPC B, which routes between them, blocks routing to VPC C and VPC D, and has a route to the Endpoints VPC.

The second route table is the same but for VPCs C and D. Finally, the route table for the endpoints VPC has routes to all VPCs, so that traffic has a return path to them.
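To make the routing logic easier to reason about, here is a minimal sketch that models the three route tables as plain Python data. The names (`rt-ab`, `vpc-endpoints`, and so on) are hypothetical labels, not real AWS identifiers, and a blocked route is modelled simply as a missing entry.

```python
# Each Transit Gateway route table lists the VPCs it has routes to.
# All names are illustrative labels, not real resource IDs.
ROUTE_TABLES = {
    "rt-ab": {"vpc-a", "vpc-b", "vpc-endpoints"},
    "rt-cd": {"vpc-c", "vpc-d", "vpc-endpoints"},
    "rt-endpoints": {"vpc-a", "vpc-b", "vpc-c", "vpc-d"},
}

# Which route table each VPC attachment is associated with.
ASSOCIATIONS = {
    "vpc-a": "rt-ab",
    "vpc-b": "rt-ab",
    "vpc-c": "rt-cd",
    "vpc-d": "rt-cd",
    "vpc-endpoints": "rt-endpoints",
}


def can_communicate(source_vpc, destination_vpc):
    """Traffic needs a route in both directions: request and return path."""
    forward = destination_vpc in ROUTE_TABLES[ASSOCIATIONS[source_vpc]]
    back = source_vpc in ROUTE_TABLES[ASSOCIATIONS[destination_vpc]]
    return forward and back


if __name__ == "__main__":
    for source, destination in [
        ("vpc-a", "vpc-b"),
        ("vpc-a", "vpc-c"),
        ("vpc-a", "vpc-endpoints"),
        ("vpc-d", "vpc-endpoints"),
    ]:
        print(source, "->", destination, can_communicate(source, destination))
```

Every VPC reaches the endpoints VPC and gets a return path, while A/B and C/D stay isolated from each other.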

VPC Endpoint

One of the advantages is that we can generate as many endpoints as we require, which is quite simple, as we will see below.

The first part of generating an endpoint is to assign a name and select the type of endpoint, which in this case will be an AWS service.

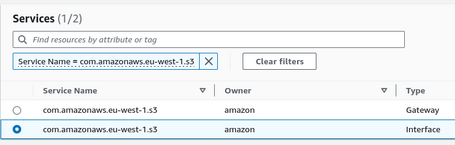

We have to select the endpoint, and we can filter by name. We will generate an Interface endpoint for S3 since, as we have mentioned, we cannot centralize Gateway endpoints.

Note: only S3 and DynamoDB offer Gateway endpoints, and these can only be used from within the VPC where they are deployed. Hence, we cannot centralize this type of endpoint.

The endpoints are regional. Therefore, we will only see the endpoints of the region in which we are deploying.

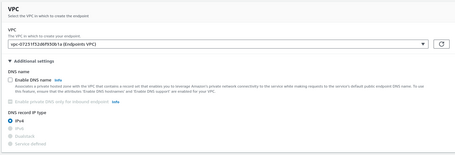

We select the VPC where we are going to deploy. We can let AWS generate the DNS name automatically, but this option does not work for centralized endpoints, since we would not manage the DNS zone (AWS generates this zone outside our account). For this reason, we leave that option unchecked.

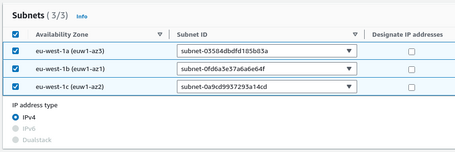

Once the VPC is selected, we select the subnets to deploy the endpoints.

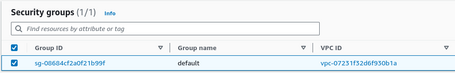

We also have to assign a Security Group, which must allow inbound access on port 443 from the sources we define.

Note: calls to an AWS endpoint use HTTPS on port 443.

Finally, we can attach a policy to define who can use the endpoint. For a centralized endpoint, we leave the policy as Full Access, since we will not restrict its use.
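As an illustration, the rule could be expressed with the parameters that boto3's `authorize_security_group_ingress` accepts. This is a sketch under assumptions: the function names, the group ID, and the source CIDRs are hypothetical, and `ec2_client` is expected to be something like `boto3.client("ec2")`.

```python
import ipaddress


def https_ingress_rules(source_cidrs):
    """Build one TCP/443 ingress permission covering all consumer CIDRs."""
    ranges = []
    for cidr in source_cidrs:
        ipaddress.ip_network(cidr)  # raises ValueError on a malformed CIDR
        ranges.append(
            {"CidrIp": cidr, "Description": "HTTPS to centralized VPC endpoints"}
        )
    return [
        {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443, "IpRanges": ranges}
    ]


def allow_https(ec2_client, group_id, source_cidrs):
    """Apply the rule to the endpoint's Security Group."""
    return ec2_client.authorize_security_group_ingress(
        GroupId=group_id,
        IpPermissions=https_ingress_rules(source_cidrs),
    )


# Possible usage (requires boto3 and AWS credentials; values are made up):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="eu-west-1")
#   allow_https(ec2, "sg-0123456789abcdef0", ["10.0.0.0/16", "10.1.0.0/16"])
```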

Once finished, we will have the endpoint available.
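The same console steps can be scripted. The following is a minimal sketch using boto3's `create_vpc_endpoint`, not a definitive implementation: the function name and all resource IDs are hypothetical, and `ec2_client` is assumed to be a `boto3.client("ec2")` for the target region.

```python
def create_centralized_endpoint(
    ec2_client,
    vpc_id,
    subnet_ids,
    security_group_id,
    service_name="com.amazonaws.eu-west-1.s3",
):
    """Create an Interface endpoint without the AWS-managed private DNS."""
    response = ec2_client.create_vpc_endpoint(
        VpcEndpointType="Interface",
        VpcId=vpc_id,
        ServiceName=service_name,
        SubnetIds=subnet_ids,
        SecurityGroupIds=[security_group_id],
        # We manage DNS ourselves with hosted zones, so this stays disabled.
        PrivateDnsEnabled=False,
        # No PolicyDocument is passed, so the default full-access policy applies.
    )
    return response["VpcEndpoint"]


# Possible usage (requires boto3 and AWS credentials; IDs are made up):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="eu-west-1")
#   endpoint = create_centralized_endpoint(
#       ec2, "vpc-0abc", ["subnet-0aaa", "subnet-0bbb"], "sg-0ccc"
#   )
#   print(endpoint["VpcEndpointId"], endpoint["DnsEntries"])
```

The `DnsEntries` returned for the endpoint are the endpoint-specific DNS names we will point our own records at.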

Hosted Zones

We already have an endpoint deployed, but even though we can reach it via Transit Gateway, we can only access it if we use the endpoint's own DNS name.

Since we want all projects to use it transparently, we have to change the resolution so that when we use the DNS name of the service, it points to the endpoint we have generated.

The solution is to generate DNS zones with the names of the endpoints of the AWS services and create records pointing to the VPC Endpoints we generate.

In the case of S3, for the eu-west-1 region, there are 4 DNS names for this endpoint, so we have to generate the four hosted zones:

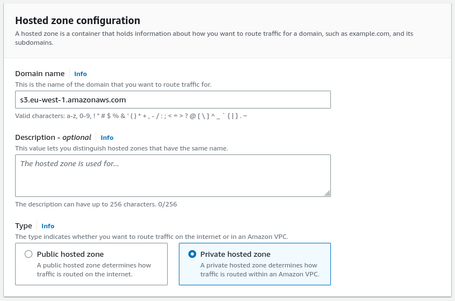

- s3.eu-west-1.amazonaws.com

- s3.eu-west-1.amazonaws.com

- s3-control.eu-west-1.amazonaws.com

- s3-accesspoint.eu-west-1.amazonaws.com

We have to repeat this process for each endpoint that we generate.

A hosted zone is quite simple to generate: we only need the name of the DNS zone and to indicate that it is private.

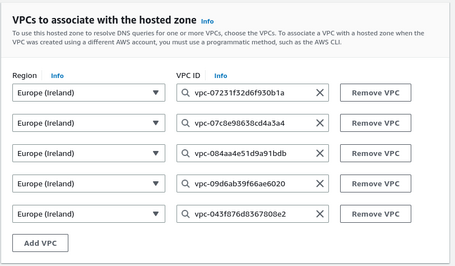

When we generate private zones, as in this case, we have to specify the VPC to associate, which is the VPC where we have generated the endpoint. We can also associate more VPCs; in this case, we associate all our VPCs.
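A sketch of this step with boto3's Route 53 client might look as follows. The function name, VPC IDs, and comment text are assumptions for illustration; `route53_client` is expected to be a `boto3.client("route53")`, and the extra VPCs are assumed to live in the same account as the zone.

```python
import uuid


def create_private_zone(
    route53_client, zone_name, endpoint_vpc_id, other_vpc_ids=(), region="eu-west-1"
):
    """Create a private hosted zone and associate same-account VPCs with it."""
    response = route53_client.create_hosted_zone(
        Name=zone_name,
        # Passing a VPC at creation time is what makes the zone private.
        VPC={"VPCRegion": region, "VPCId": endpoint_vpc_id},
        CallerReference=str(uuid.uuid4()),  # must be unique per request
        HostedZoneConfig={
            "Comment": "Centralized VPC endpoint zone",
            "PrivateZone": True,
        },
    )
    zone_id = response["HostedZone"]["Id"]

    # Associate any additional VPCs that should resolve this zone.
    for vpc_id in other_vpc_ids:
        route53_client.associate_vpc_with_hosted_zone(
            HostedZoneId=zone_id,
            VPC={"VPCRegion": region, "VPCId": vpc_id},
        )
    return zone_id


# Possible usage (requires boto3 and AWS credentials; IDs are made up):
#   import boto3
#   route53 = boto3.client("route53")
#   create_private_zone(route53, "s3.eu-west-1.amazonaws.com",
#                       "vpc-0endpoints", ["vpc-0aaa", "vpc-0bbb"])
```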

If we want to associate VPCs in different accounts, we must first authorize the association. We can do this from the account where we created the hosted zone, using the CLI command create-vpc-association-authorization or the equivalent API action.

Once we generate the authorization, we can perform the association from the account where the VPC is deployed, with the CLI command associate-vpc-with-hosted-zone or the equivalent API action.

Note: it is not possible to perform these actions from the console. We can execute them using IaC, but we will never see the association in the console from the account where the VPC is deployed; it is only visible through the API or CLI.
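The two-step flow could be scripted roughly like this. It is a sketch under assumptions: the function name is hypothetical, and the two arguments are `boto3.client("route53")` clients created with credentials for the zone owner account and the VPC owner account respectively.

```python
def share_zone_with_vpc(
    zone_owner_route53,
    vpc_owner_route53,
    hosted_zone_id,
    vpc_id,
    region="eu-west-1",
    cleanup=True,
):
    """Authorize and then associate a VPC from another account."""
    vpc = {"VPCRegion": region, "VPCId": vpc_id}

    # Step 1: run in the account that owns the hosted zone.
    zone_owner_route53.create_vpc_association_authorization(
        HostedZoneId=hosted_zone_id, VPC=vpc
    )

    # Step 2: run in the account that owns the VPC.
    vpc_owner_route53.associate_vpc_with_hosted_zone(
        HostedZoneId=hosted_zone_id, VPC=vpc
    )

    # Optional: remove the authorization once it has been used.
    # This does not undo the association itself.
    if cleanup:
        zone_owner_route53.delete_vpc_association_authorization(
            HostedZoneId=hosted_zone_id, VPC=vpc
        )


# Possible usage (requires boto3 and one session per account; IDs are made up):
#   import boto3
#   zone_owner = boto3.Session(profile_name="network").client("route53")
#   vpc_owner = boto3.Session(profile_name="project").client("route53")
#   share_zone_with_vpc(zone_owner, vpc_owner, "Z0123456789ABC", "vpc-0aaa")
```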

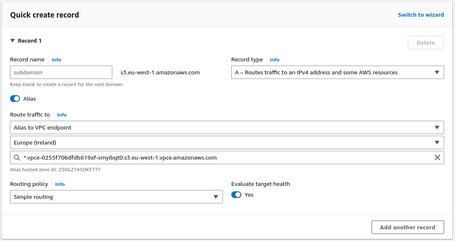

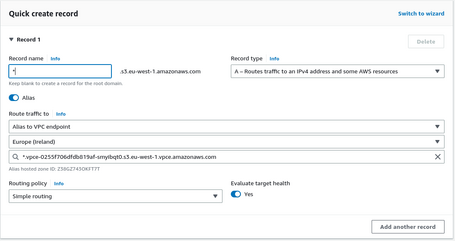

Once the zone is ready, we must generate the DNS records.

- First, a DNS A record for the name of the hosted zone, pointing to the VPC Endpoint.

- Second, a wildcard DNS A record, also pointing to the VPC Endpoint.
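The two records above can be sketched as a Route 53 change batch. Note the assumptions: this example uses alias A records targeting the endpoint's own DNS name (one common way to point at an endpoint; the article does not specify alias versus plain A records), and the function name, endpoint DNS name, and zone IDs are hypothetical placeholders. The endpoint's real values come from its `DnsEntries`.

```python
def endpoint_alias_changes(zone_name, endpoint_dns_name, endpoint_hosted_zone_id):
    """Build a change batch with an apex and a wildcard alias A record."""

    def alias(record_name):
        return {
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": record_name,
                "Type": "A",
                "AliasTarget": {
                    # Hosted zone ID and DNS name reported by the VPC endpoint.
                    "HostedZoneId": endpoint_hosted_zone_id,
                    "DNSName": endpoint_dns_name,
                    "EvaluateTargetHealth": False,
                },
            },
        }

    return {"Changes": [alias(zone_name), alias("*." + zone_name)]}


# Possible usage (requires boto3 and AWS credentials; values are made up):
#   import boto3
#   route53 = boto3.client("route53")
#   route53.change_resource_record_sets(
#       HostedZoneId="Z0123456789ABC",  # our private hosted zone
#       ChangeBatch=endpoint_alias_changes(
#           "s3.eu-west-1.amazonaws.com",
#           "vpce-0123abcd-example.s3.eu-west-1.vpce.amazonaws.com",
#           "Z0ENDPOINTZONE",
#       ),
#   )
```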

Once we generate all the hosted zones and all the records, we will resolve the private IPs of the VPC Endpoints from all our VPCs. Because we have private communication via Transit Gateway, we can connect with these endpoints privately and securely.
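To check this from an instance in one of the associated VPCs, a small script along these lines could be used. It is a sketch with hypothetical function names; it relies only on the standard library and treats "private" as whatever Python's `ipaddress` module classifies as private.

```python
import ipaddress
import socket


def resolved_ips(hostname, resolver=socket.getaddrinfo):
    """Return the distinct IPv4 addresses the hostname resolves to."""
    infos = resolver(hostname, 443, family=socket.AF_INET, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def resolves_privately(hostname, resolver=socket.getaddrinfo):
    """True only if the name resolves and every address is private."""
    ips = resolved_ips(hostname, resolver)
    return bool(ips) and all(ipaddress.ip_address(ip).is_private for ip in ips)


# Possible usage from an EC2 instance in an associated VPC:
#   print(resolved_ips("s3.eu-west-1.amazonaws.com"))
#   print(resolves_privately("s3.eu-west-1.amazonaws.com"))
```

The `resolver` parameter exists only so the check can be exercised without network access; in normal use the default is enough.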

If we generate DNS zones with the names of the endpoints, projects do not need to perform any additional tasks, since calls to the AWS endpoint DNS names from any associated VPC will use the private endpoints. This way, we guarantee that the solution is transparent for the different projects, since it does not require any modification on their side. When we deploy the solution, all projects will use the VPC Endpoints automatically.

Conclusions

Deploying this solution via console is tedious if we deploy many endpoints, but implementing it via IaC is very simple. We can also integrate it into our baseline when we generate new accounts, which allows all projects to have this functionality transparently.

As a drawback, we have no visibility of the VPC Endpoints and DNS names shared with us if we use the console (it is possible via the API or CLI). That is a problem because we lack visibility within a project that uses the endpoints. However, if we test DNS resolution, we will see that the names resolve correctly to the private endpoints.

Some AWS services may recommend enabling private communication by deploying endpoints within the account, unaware that we use centralized endpoints. For example, I ran into this problem with Airflow, although I use a workaround.

It is also essential to know that some endpoints are incompatible with this methodology. For example, it is not compatible with API Gateway or SFTP.

Since these services work on a one-to-one basis, we must deploy the endpoint in the same VPC where we deploy these resources.

Otherwise, it is a handy solution to centralize part of the Networking and simplify deployments for projects in large environments.

There are many examples. One of them is to deploy the SSM Endpoint in an automated way for all the environments we generate. Thus, accessing the EC2 instances using Session Manager or Fleet Manager does not require internet access.

As an additional advantage of centralizing, we also save costs by using a smaller number of endpoints. In eu-west-1 (Ireland), an Endpoint in 2 AZs costs about $16 per month and $24 per month in 3 AZs. If we deploy ten endpoints in one hundred accounts, the cost can skyrocket to $16,000 or $24,000 monthly, depending on the number of AZs. In these types of cases, centralizing can reduce our costs.
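The arithmetic behind these figures can be checked with a short calculation. The per-AZ monthly price below is derived from the article's approximate numbers ($16 for 2 AZs), not from a price list, and the comparison ignores data processing and Transit Gateway charges.

```python
# Approximate monthly cost of one endpoint in one AZ, in USD.
# Derived from the figures above (about $16 for 2 AZs), so treat it as a rough value.
MONTHLY_COST_PER_ENDPOINT_AZ = 8


def monthly_endpoint_cost(endpoints, azs, accounts=1):
    """Fixed monthly cost of the endpoints only (no data or TGW charges)."""
    return endpoints * azs * accounts * MONTHLY_COST_PER_ENDPOINT_AZ


distributed_2az = monthly_endpoint_cost(endpoints=10, azs=2, accounts=100)
distributed_3az = monthly_endpoint_cost(endpoints=10, azs=3, accounts=100)
centralized_2az = monthly_endpoint_cost(endpoints=10, azs=2)

print("Per-account endpoints, 2 AZs:", distributed_2az)  # 16000
print("Per-account endpoints, 3 AZs:", distributed_3az)  # 24000
print("Centralized endpoints, 2 AZs:", centralized_2az)  # 160
```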

In summary, this architecture is practical in environments where Transit Gateway is already deployed and where we need to use VPC Endpoints.
